The Control Layer Comes to Life

Why Agentic AI Is Finally Delivering

Why it matters: After years of experimental pilots and inflated promises, agentic AI is producing measurable results in production environments – but only where organisations embed it into tightly scoped workflows with proper governance. The difference between 3:1 ROI and wasted investment now hinges on architecture decisions being made this quarter.

From chatbot curiosity to workflow orchestration

Something shifted in the past twelve months. The AI conversation moved from “what can this chatbot do?” to “how do we orchestrate entire processes end-to-end?” The evidence is now impossible to ignore.

In oil and gas exploration, a platform called NeoSpatial uses large language models to translate natural-language objectives into sequences of geospatial operations, automating workflows that previously required specialist GIS expertise for every scenario.[5] In cardiac catheterisation units, an AI voice assistant named Sofiya completed 806 pre-procedural patient calls over ninety days, handling standardised instructions while nurses focused on complex clinical work.[7] At the Natural History Museum and Royal Botanic Garden Edinburgh, computer vision systems now flag imaging errors in herbarium digitisation at rates of 600 to 1,000 sheets daily, catching mistakes before they trigger costly re-digitisation.[3]

These are not laboratory demonstrations. They are production systems with quantified throughput, measured error rates, and documented governance frameworks.

The architecture that actually works

What distinguishes these successful deployments from the graveyard of abandoned AI pilots? Three architectural patterns appear consistently.

First, narrow initial scope. Sofiya handles pre-procedure calls – not general patient communication. NeoSpatial targets well-placement planning, infrastructure routing, and basin evaluation – not open-ended exploration strategy. The NHM-RBGE system detects missing barcodes, cropping errors, and focus problems – not subjective curatorial judgements.[3][5][7]

Second, integrated measurement. Every successful case instruments outcomes from day one. The cardiac catheterisation study tracked call completion rates and patient satisfaction across the full ninety-day period.[7] Enterprise co-pilot programmes measuring task completion times, throughput, and error rates report ROI ranging from 3:1 to 10:1 – while those deploying without baseline metrics struggle to demonstrate value.[4]
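To make the measurement point concrete, here is a minimal sketch of how a baseline comparison might be turned into an ROI ratio. Everything in it is a hypothetical illustration rather than data from the cited studies: the `WorkflowMetrics` type, the `roi_ratio` function, the hourly cost, and the deployment cost are all assumed, and the only benefit counted is labour time saved per task.

```python
from dataclasses import dataclass


@dataclass
class WorkflowMetrics:
    """Hypothetical per-period measurements for one workflow."""
    tasks_completed: int
    minutes_per_task: float


def roi_ratio(baseline: WorkflowMetrics, with_ai: WorkflowMetrics,
              hourly_cost: float, ai_cost: float) -> float:
    """Value of labour time saved in the period, divided by AI cost.

    Assumption: time saved per task is the only benefit counted.
    """
    if ai_cost <= 0:
        raise ValueError("ai_cost must be positive")
    minutes_saved = (
        (baseline.minutes_per_task - with_ai.minutes_per_task)
        * with_ai.tasks_completed
    )
    value_saved = minutes_saved / 60 * hourly_cost
    return value_saved / ai_cost


# Illustrative numbers only: task time halves from 12 to 6 minutes.
baseline = WorkflowMetrics(tasks_completed=1000, minutes_per_task=12.0)
with_ai = WorkflowMetrics(tasks_completed=1000, minutes_per_task=6.0)
ratio = roi_ratio(baseline, with_ai, hourly_cost=30.0, ai_cost=1000.0)
print(f"ROI {ratio:.1f}:1")
```

The calculation cannot be run at all without the `baseline` figures, which is the practical reason unmeasured deployments struggle to demonstrate value.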

Third, explicit governance guardrails. NeoSpatial operates within policy-driven constraints that ensure outputs respect exploration guidelines and regulatory requirements.[5] Radiology workflows embedding agentic AI define complexity thresholds for automation and retain human oversight for high-impact decisions.[6] The lesson is consistent: governance is not a constraint on value – it is a prerequisite for it.
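A guardrail of this kind can be expressed as a simple routing rule. The sketch below is an assumption about how a complexity threshold and a human-oversight requirement might be encoded; the `Task` fields, the `route_task` function, and the 0.5 threshold are hypothetical and are not taken from NeoSpatial or the radiology review.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Task:
    name: str
    complexity: float   # hypothetical score between 0 and 1
    high_impact: bool   # True when a person must sign off


def route_task(task: Task, complexity_threshold: float = 0.5) -> Route:
    """Automate only routine, low-impact work; send the rest to a person."""
    if task.high_impact or task.complexity > complexity_threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTOMATE


routine = Task("standard reminder", complexity=0.2, high_impact=False)
critical = Task("treatment decision", complexity=0.2, high_impact=True)
print(route_task(routine).value)   # automate
print(route_task(critical).value)  # human_review
```

The design choice worth noting is that the impact flag overrides the complexity score: a simple task with serious consequences still goes to a person.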

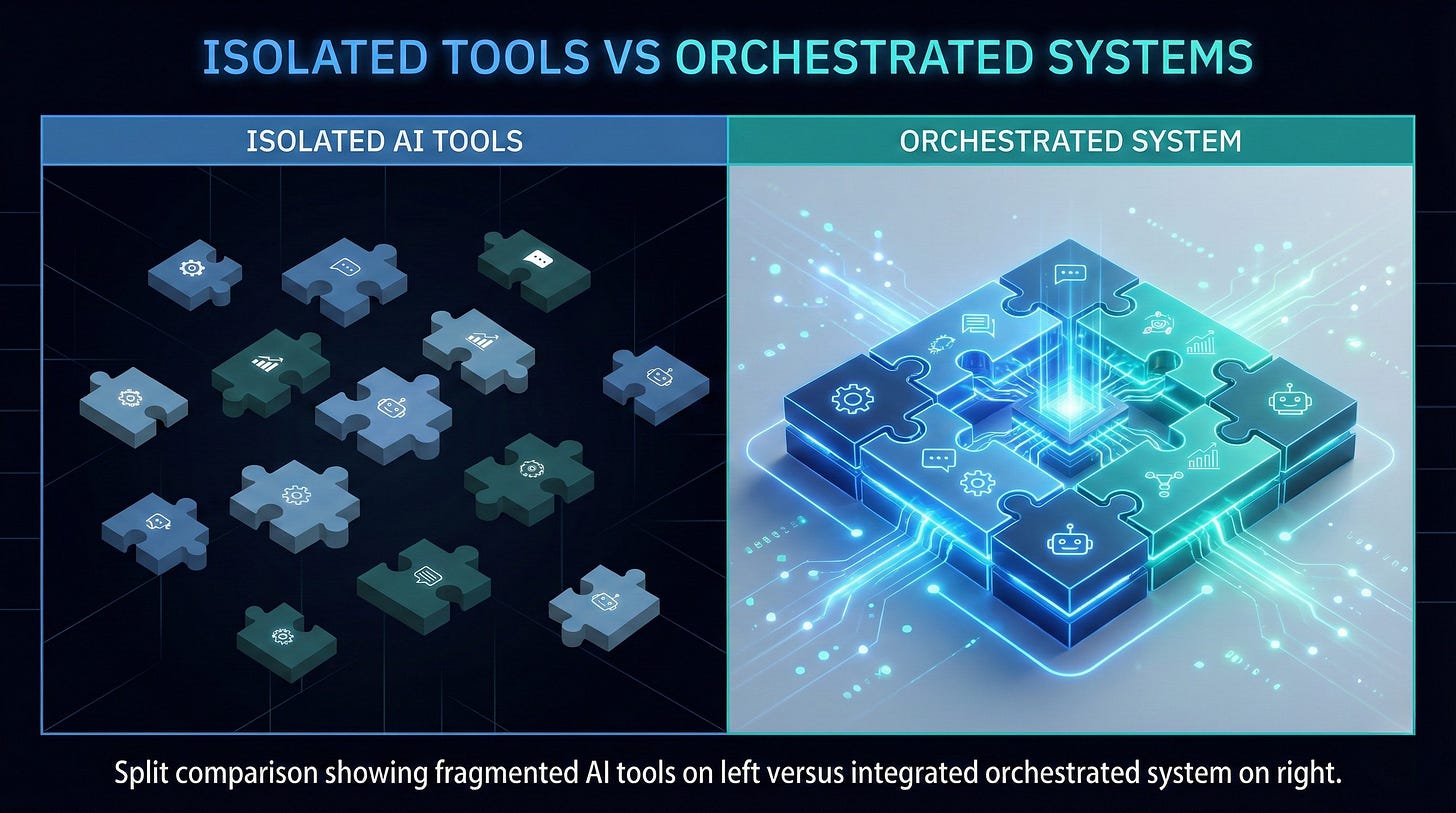

The 3.5x multiplier mid-market firms cannot ignore

Perhaps the most striking finding from recent enterprise deployments concerns how AI co-pilots are positioned within organisations. Firms treating co-pilots as orchestration layers connecting multiple systems – CRM, ticketing, development tools, analytics platforms – achieve approximately 3.5 times the productivity improvement of those rolling out isolated assistants.[4]

The mathematics are instructive. A standalone AI chatbot answering questions about company policy might save an employee five minutes per query. An orchestrated co-pilot that pulls customer history from one system, checks inventory in another, drafts a response, and logs the interaction produces compound efficiency gains across an entire workflow.
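The orchestrated case can be sketched as a single function that touches each system in turn. This is an illustrative toy, not a real integration: `InMemorySystems` stands in for a CRM, an inventory service, and an interaction log, and `handle_enquiry` and all identifiers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class InMemorySystems:
    """Stand-ins for a CRM, an inventory service and an interaction log."""
    customer_history: dict[str, list[str]]
    stock_levels: dict[str, int]
    interaction_log: list[str] = field(default_factory=list)


def handle_enquiry(systems: InMemorySystems, customer_id: str, sku: str) -> str:
    """Run the four workflow steps in one pass and return a draft reply."""
    # Step 1: pull customer history from one system.
    history = systems.customer_history.get(customer_id, [])
    # Step 2: check inventory in another.
    in_stock = systems.stock_levels.get(sku, 0) > 0
    availability = "in stock" if in_stock else "currently out of stock"
    # Step 3: draft a response from the combined context.
    draft = (
        f"Hello, thanks for getting in touch. Item {sku} is {availability}. "
        f"We can see {len(history)} previous order(s) on your account."
    )
    # Step 4: log the interaction.
    systems.interaction_log.append(f"{customer_id}:{sku}:{availability}")
    return draft


systems = InMemorySystems(
    customer_history={"c42": ["order-1", "order-2"]},
    stock_levels={"SKU-7": 3},
)
reply = handle_enquiry(systems, "c42", "SKU-7")
print(reply)
```

Each step that would otherwise be a separate manual lookup is folded into one call, which is where the compounding comes from.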

For mid-market organisations, this insight reframes the investment question. The cost of AI tooling matters less than the cost of not integrating it properly. A £50,000 co-pilot deployment that remains siloed may deliver less value than a £100,000 implementation wired into existing operational systems with clear measurement from the start.

The control layer insight: Organisations achieving the highest returns treat AI not as a tool to be added, but as an orchestration capability to be architected into existing workflows.

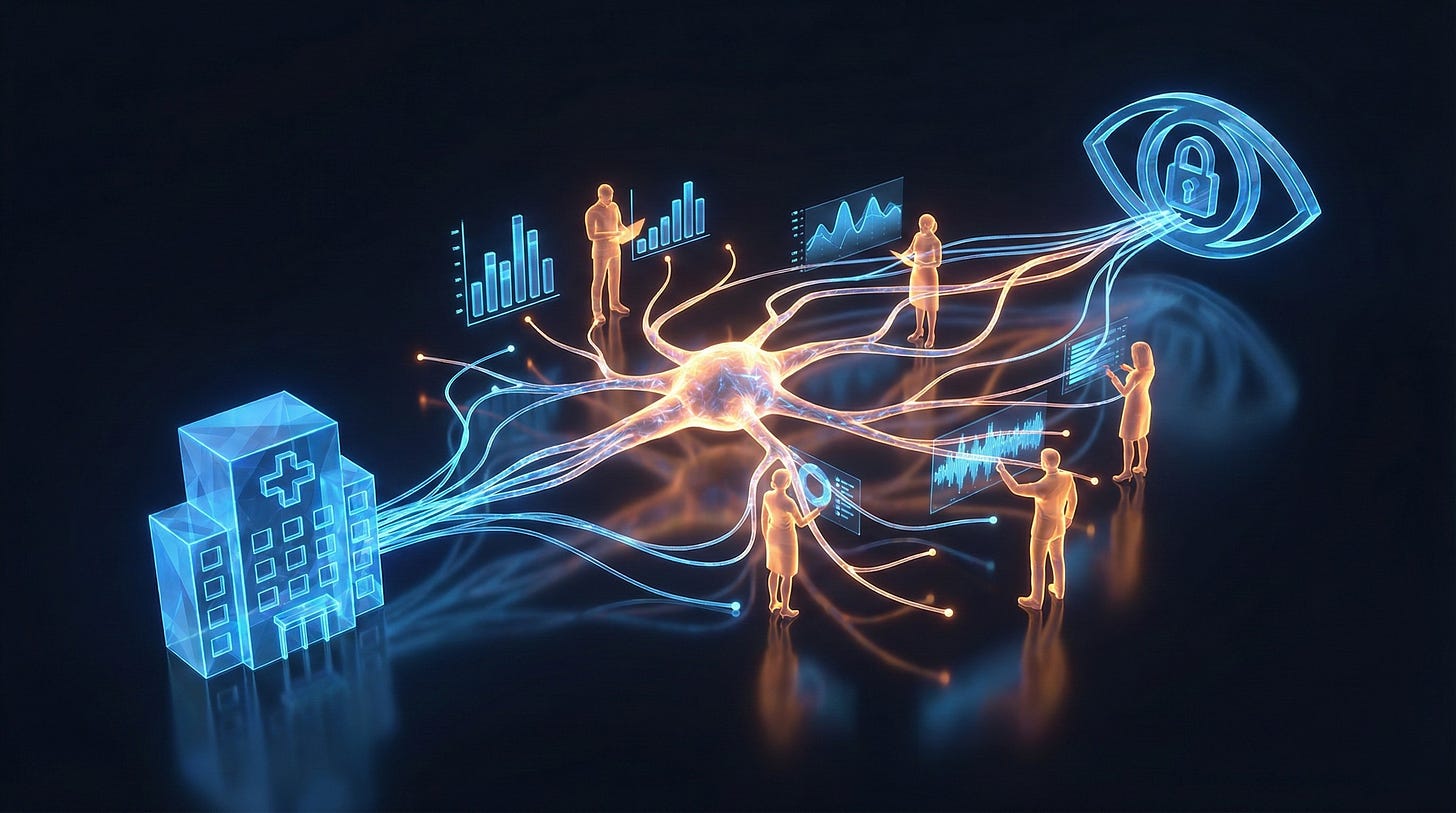

Healthcare as the proving ground

Healthcare offers the most instructive test case because the stakes are highest and the governance requirements most stringent.

The Sofiya deployment demonstrates what responsible automation looks like in practice. The AI voice assistant delivered standardised pre-procedure instructions, collected allergy and medication information, and answered common questions – but all transcripts were recorded and reviewed, with clear escalation paths where the assistant could not safely resolve queries.[7] Nurses remained firmly in the loop. The system handled routine communication; humans handled exceptions and clinical judgement.

Meanwhile, radiology departments are pushing further into multi-agent orchestration. A January 2026 review describes systems where multiple AI agents coordinate protocol selection before imaging, initial image analysis, tool invocation, and preliminary report generation.[6] These configurations outperform single-agent approaches on complex, multi-step processes and reduce manual interventions – but implementation requires defining complexity thresholds, ensuring economic sustainability, and building appropriate governance frameworks.

The pattern generalises beyond healthcare. Automation works when it addresses clearly bounded tasks with measurable outcomes and human oversight for edge cases. It fails when organisations attempt to automate judgement before they have automated the routine.

Analysis

The shift from experimental AI to production agentic systems marks a genuine inflection point – but not for the reasons typically cited in technology commentary.

The underlying models have not suddenly become dramatically more capable. What changed is organisational maturity. The early adopters who invested in data infrastructure, workflow documentation, and governance frameworks three years ago now have the foundations to deploy orchestrated AI systems that actually deliver. Organisations that skipped those foundations are discovering that bolting AI onto broken processes produces broken AI.

This creates a widening gap. Firms with instrumented, governed workflows can layer agentic automation and measure results within quarters. Those without are stuck in pilot purgatory, unable to demonstrate value because they lack the baseline data to prove improvement.

For boards evaluating AI investments, the implication is uncomfortable but clear: the biggest barrier to ROI is not technology cost or model capability. It is the absence of the operational discipline required to deploy technology responsibly.

Risks and constraints

Three significant constraints warrant attention.

The measurement challenge cuts both ways. Organisations that instrument their workflows can demonstrate value, but they also expose themselves to evidence that AI investments are not delivering. Some leaders may prefer the ambiguity of unmeasured pilots to the accountability of documented results. This is a cultural problem that technology cannot solve.

Governance frameworks remain immature. While NeoSpatial and radiology deployments describe policy guardrails and complexity thresholds, industry-wide standards for agentic AI governance are still emerging. Organisations deploying today must develop bespoke governance approaches, with the attendant risk that their frameworks may not align with regulations that arrive later.

Talent constraints persist. Successful implementations require people who understand both the business domain and the technical architecture of agentic systems. The NeoSpatial case explicitly notes reduced dependency on scarce GIS specialists – but the platform itself required substantial expertise to build.[5] The skills gap has shifted, not disappeared.

What to do next

For boards and executives: Demand baseline metrics before approving AI investments. If your organisation cannot measure current workflow performance, it cannot measure AI-driven improvement. The first investment should be in instrumentation, not automation.

For technical leaders: Evaluate AI opportunities through an orchestration lens. Ask not “what can this tool do?” but “how does this integrate with our existing systems and data flows?” Prioritise implementations that connect multiple systems over those that operate in isolation.

For mid-market organisations: Start with your most clearly documented, highest-volume workflow. Scope tightly, measure rigorously, and build governance from day one. The 3.5x multiplier for integrated co-pilots suggests the competitive advantage lies not in being first to deploy AI, but in being first to deploy it properly.

Disclaimer: This article represents analysis based on publicly available information as of January 2026. It does not constitute legal, financial, or professional advice.

If your organisation needs support implementing AI governance frameworks that deliver measurable outcomes, Arkava helps mid-market enterprises turn AI investment into accountable results. Contact: engage@arkava.ai

Couldn't agree more. This really builds on your earlier piece about the challenge of moving past the initial AI hype cycle. It's so refreshing to see practical applications and tangible ROI, not just another PowerPoint full of 'someday' promises. Focusing on tightly scoped workflows and proper governance is clearly the difference.