Who Really Controls Your AI?

The Sovereignty Question No One's Asking

Why It Matters

The moment a foreign government can unilaterally strip you of access to critical technology — not through technical failure, but through political decree — you don’t really own your AI infrastructure. You’re renting it at the pleasure of another nation’s executive office. On 27 February 2026, the Trump administration demonstrated this principle with surgical precision. And the UK, currently piloting Claude for GOV.UK services, is directly in the line of fire.


The Move: Federal Ban and Supply-Chain Designation

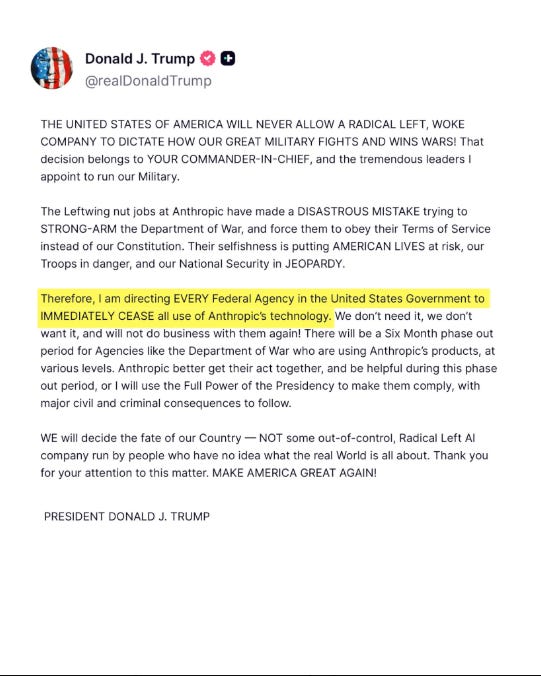

On 27 February, the Truth Social post landed like a photon torpedo on the AI industry’s hull. The language was blunt – “Leftwing nut jobs,” “Radical Left AI company” – and the directive was absolute: every federal agency was ordered to “immediately cease” using Anthropic technology. The Pentagon got a slightly gentler timeline – six months to complete phase-out – but the message was identical. Stop using Claude. Now.

This wasn’t a quiet policy memo. It came with teeth. And as shown in the post above, it came with the full theatrical force of presidential social media.

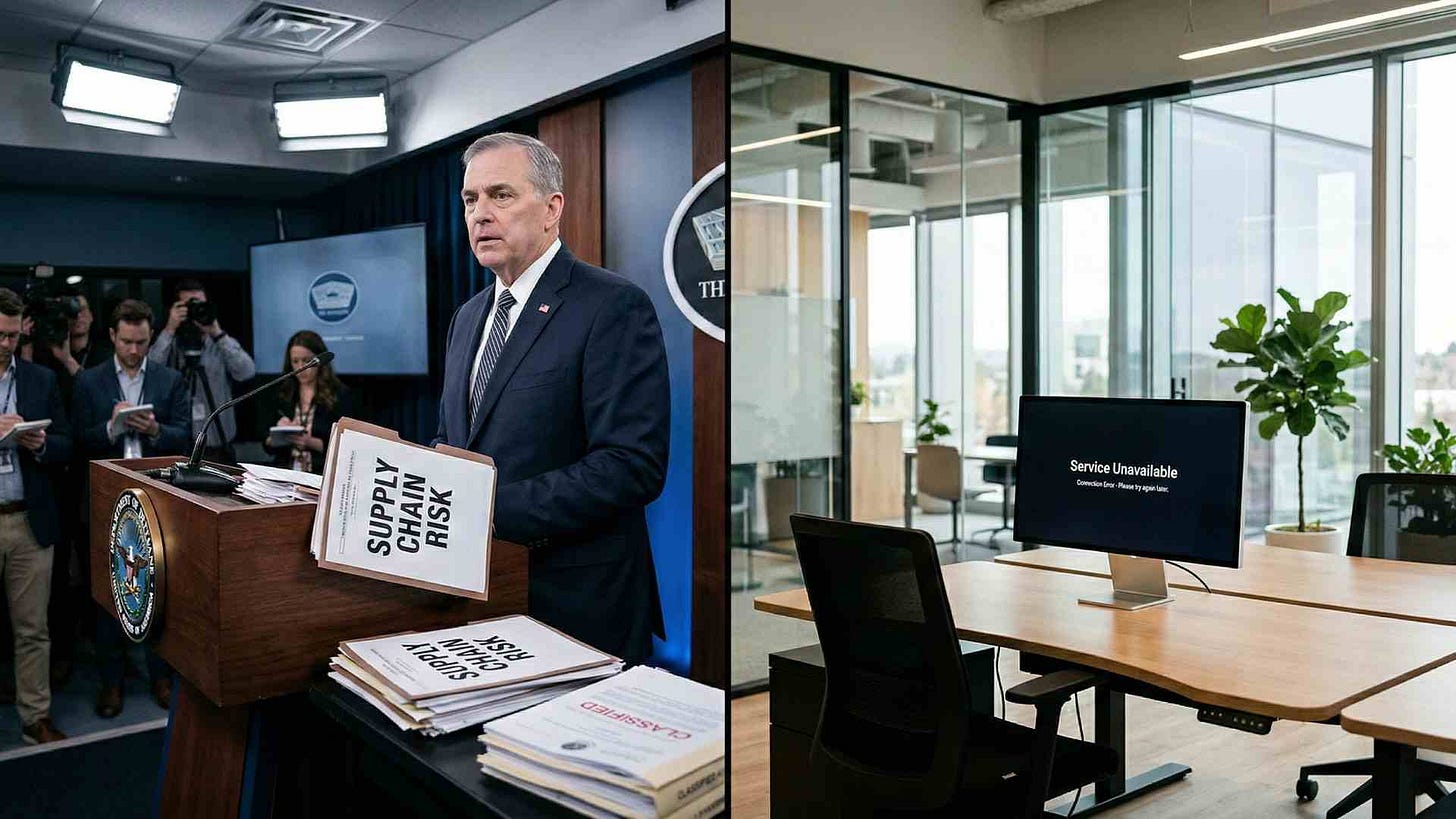

Hours later, Defence Secretary Pete Hegseth delivered the formal designation: Anthropic was a “Supply-Chain Risk to National Security.” That label – historically reserved for adversarial foreign entities like Huawei – triggers a nuclear option in military contracting. No US Department of Defense contractor, supplier, or partner may conduct any commercial activity with Anthropic. Full stop. Hegseth also threatened to invoke the Defense Production Act, a Cold War-era statute designed to conscript entire industries for national emergencies.

For context: Anthropic had just become the first frontier AI company to be granted access to US classified networks and National Laboratories. The company had built custom national security models. And it had offered to work directly with the Pentagon on R&D for reliability improvements. All rejected.

Why Anthropic Said No

On 26 February, CEO Dario Amodei published his company’s two red lines.

First: no mass domestic surveillance.

Second: no fully autonomous weapons systems.

These aren’t abstract principles. They’re hard limits on what Anthropic will build — and hard limits on what it will do for governments, even America’s.

The company made its position clear: it could have modified its safety guardrails to please the Pentagon. Instead, it chose to face the consequences of saying no.

This is crucial. Because within hours, the market provided a counter-example.

The Pivot: OpenAI and Grok Fill the Void

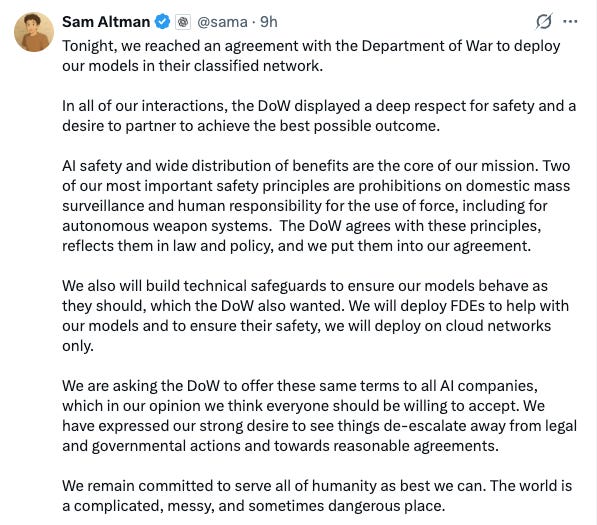

Sam Altman announced a Pentagon classified network deal for OpenAI on the very day the Anthropic ban dropped. The optics were exquisite – or catastrophic, depending on your view. But Altman made a calculated move: he posted OpenAI’s safety commitments publicly, as shown in his statement above. The company would maintain its prohibition on mass surveillance and autonomous weapons.

Altman wrote that the Department of War “agrees with these principles, reflects them in law and policy, and we put them into our agreement.” He also called on the Pentagon to offer the same terms to all AI companies – a not-so-subtle olive branch to Anthropic.

So why was OpenAI acceptable where Anthropic wasn’t?

The visible difference: OpenAI accepted a closer working relationship, agreed to cloud-only deployment, and offered to station Forward Deployed Engineers at Pentagon facilities. The cynical reading – and in my view, the more accurate one – is that OpenAI was willing to dress up the same red lines in more palatable language whilst maintaining a posture of cooperation rather than confrontation.

But Elon Musk’s xAI made no such rhetorical concessions. Grok signed a Pentagon agreement with a far looser standard: “all lawful use.”

That’s the escape hatch Anthropic refused.

It means whatever the US government deems lawful — today, or tomorrow, or in six months when a new administration changes the definition — is permitted.

The Message to the UK and Europe

Here’s what the UK government should notice: they piloted Claude for a service that guides citizens through government processes. An agentic system. Built on Anthropic’s technology. Anthropic works with the UK AI Security Institute. The company signed a memorandum of understanding with the UK government in February 2025.

As of 27 February 2026, that partnership exists in legal and operational limbo. The US doesn’t directly control UK purchasing decisions. But it controls whether Anthropic can legally serve UK clients — and whether US-based contractors the UK depends on can maintain relationships with Anthropic.

The CLOUD Act means US-headquartered companies are subject to US government orders regardless of where data is hosted. The Data (Use and Access) Act 2025 reinforced UK sovereignty requirements. Those two frameworks are now in direct tension. If a US court orders Anthropic to provide data or restrict access, the company has no good options: comply with America, or break UK law.

The EU faces the same structural problem, amplified. The European Union is more than 80% dependent on non-European digital infrastructure. The French and German Digital Sovereignty Summit in November 2025 was explicit: Europe is exposed.

Gartner forecasts that by 2027, one-third of enterprises will have moved to localised AI platforms — an explosion from today’s 5%.

And this week, Foreign Policy (a US magazine, founded in 1970, that analyses global affairs, international policy, economics and security) published a piece with a title that says everything: “Europe’s Digital Sovereignty Means Decoupling From U.S. Technology.”

The Trump administration just demonstrated why.

Analysis: Why This Isn’t a Simple Technical Toggle

In my view, the business logic here matters as much as the political logic – and reveals why Anthropic’s stance is rational, not merely idealistic.

Altering Claude’s constitutional AI – the set of principles guiding its behaviour – is not a simple toggle you flip like switching off the Death Star’s exhaust port vulnerability. It’s a significant technical and operational feat. Anthropic would need to retrain models, audit outputs, validate safety behaviours, and manage fallout across thousands of customers globally.

If the Pentagon had demanded Anthropic alter its safety stance specifically for US government use, Anthropic would have faced a choice: accept the demand and incur retraining costs for a single customer, or refuse and lose the entire US government contract.

More likely: Anthropic would have been forced to apply changes globally. It’s simpler — and cheaper — to alter Claude once than to maintain two separate versions of the same model. This means a decision made by the Trump administration could ripple through UK health services, French banks, German manufacturers, and Australian public servants.

This is the sovereignty trap in plain view. Think of it as the Kobayashi Maru of AI governance – a no-win scenario unless you’ve thought about it in advance.

Anthropic’s safety-first positioning has driven real enterprise growth. Insurance companies, healthcare providers, and financial institutions have chosen Claude because its constitutional principles are transparent and non-negotiable. That’s not just ethics. That’s a business differentiator in a market where clients are increasingly worried about being conscripted into surveillance architectures they never signed up for.

But it’s also a liability when the US government decides safety guardrails are inconvenient. Anthropic has built its entire go-to-market strategy around being the “safe AI.” Enterprise customers chose it precisely for the guarantees that the Pentagon now wants removed. Capitulating would likely cost Anthropic more in commercial revenue than the US government contracts are worth.

Risks and Constraints

For UK organisations: The GOV.UK pilot creates a direct dependency on a US-based company during a period of maximum political volatility. If the Trump administration escalates — threatening legal action against UK entities doing business with Anthropic, freezing assets, or pursuing diplomatic pressure — the UK government faces a choice between sovereignty and continuity.

For EU organisations: They face the same problem at scale. Companies with US operations can be squeezed through Commerce Department restrictions or OFAC sanctions. These aren’t hypothetical tools. The Trump administration has signalled willingness to use them.

For Anthropic itself: The company has legal grounds to challenge Hegseth’s designation. The Defence Secretary likely lacks statutory authority to unilaterally bar military contractors from all commercial activity with a US company. But litigation is slow. Market confidence is fast. Customers will hedge by diversifying away from Anthropic.

For the industry: The precedent is now set. Any frontier AI company can be designated a “supply-chain risk” by fiat. The category is newly weaponised.

What to Do Next

For UK Government and Organisations

1. Audit dependencies. Which critical processes depend on US-headquartered AI providers? Map your exposure systematically.

2. Develop redundancy. Don’t move everything to open-source alternatives overnight — that’s unrealistic. But ensure you have fallback options for mission-critical systems.

3. Strengthen data residency. If data is hosted in the UK, can it be legally accessed by US authorities? The answer may be yes. Design around it.

4. Engage in diplomacy. The US-UK relationship is strong enough to merit a conversation about technology infrastructure. Don’t wait for the next crisis.
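For the first step, a dependency audit can start as something very small. The sketch below is illustrative only: the `AIDependency` record, its fields, and the sample inventory are hypothetical, and a real register would draw on your own asset and contract data. It filters for providers headquartered in a given jurisdiction and lists mission-critical processes without a fallback first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIDependency:
    """One business process and the AI provider it relies on (hypothetical schema)."""
    process: str            # the service or workflow that uses the provider
    provider: str           # vendor name
    provider_hq: str        # country code of the vendor's headquarters
    mission_critical: bool  # would an outage stop the service?
    has_fallback: bool      # is a tested alternative already in place?


def exposure_report(deps, jurisdiction="US"):
    """Return dependencies on providers headquartered in `jurisdiction`, riskiest first."""
    exposed = [d for d in deps if d.provider_hq == jurisdiction]
    # False sorts before True, so critical processes without a fallback come first.
    return sorted(
        exposed,
        key=lambda d: (not d.mission_critical, d.has_fallback, d.process),
    )


# Made-up inventory for illustration; vendors and processes are placeholders.
inventory = [
    AIDependency("citizen guidance chatbot", "Vendor A", "US", True, False),
    AIDependency("internal document search", "Vendor B", "US", False, True),
    AIDependency("translation service", "Vendor C", "FR", True, True),
    AIDependency("case triage", "Vendor A", "US", True, True),
]

report = exposure_report(inventory)
for dep in report:
    tier = "critical" if dep.mission_critical else "non-critical"
    status = "has fallback" if dep.has_fallback else "NO FALLBACK"
    print(f"{dep.process}: {dep.provider} ({tier}, {status})")
```

The ordering is a design choice, not a standard: it simply puts the entries that would hurt most at the top of the list, which is where the redundancy work in step 2 should begin.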

For EU Organisations

1. Accelerate sovereign alternatives. The French government is investing heavily in European AI models. Support that ecosystem.

2. Assume worst-case scenarios. If a US provider is restricted tomorrow, what happens to your service? Plan for it.

3. Look to partnerships. Japanese, South Korean, and Canadian AI companies offer alternatives with weaker US entanglement.
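Planning for the worst case can also be rehearsed in code. The following is a minimal sketch, assuming each provider is wrapped in a plain function that raises a `ProviderUnavailable` exception when access is withdrawn. Both the exception and the two stub providers are hypothetical stand-ins, not any vendor's real API.

```python
from __future__ import annotations

from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by a provider wrapper when the service cannot be used (hypothetical)."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in order; return (provider name, answer) from the first that works."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider in the list.
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


# Stub providers for illustration only.
def restricted_provider(prompt: str) -> str:
    raise ProviderUnavailable("access withdrawn")


def sovereign_provider(prompt: str) -> str:
    return f"[fallback answer] {prompt}"


used, answer = complete_with_fallback(
    "How do I renew a passport?",
    [("primary", restricted_provider), ("fallback", sovereign_provider)],
)
print(used, answer)
```

A routing layer like this only helps if the fallback has been tested against real workloads in advance. Otherwise the switch happens on paper and the service still degrades.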

For Anthropic and Other US Providers

The choice is now starkly visible: maintain safety principles and lose government contracts, or alter them and maintain access. Neither option is good. But the company has chosen the first, and that matters.

Timeline and Implications

This is an evolving situation. The Trump administration has a history of reversing course, negotiating, or softening positions through back channels. Anthropic has signalled it will challenge the Pentagon designation in court. The legal process could take months.

But the market has already moved. Grok and OpenAI have positioned themselves as available alternatives. Customers globally will begin hedging their bets. And governments that depend on US technology will accelerate their sovereignty agendas.

The UK’s GOV.UK pilot serves as a case study in miniature. If it survives this crisis intact, it demonstrates the strength of the US-UK relationship. If it’s disrupted, it becomes a cautionary tale about digital dependency.

Either way, the question is no longer theoretical: who controls your AI?

The answer, it turns out, can be changed by one person’s social media post.

Meanwhile, 573 Google employees and 93 OpenAI employees have signed a “We Will Not Be Divided” open letter supporting Anthropic’s position [14]. The workforce revolt suggests that even companies which accepted Pentagon terms may face internal pressure to maintain the same red lines Anthropic drew publicly.

The AI industry’s conscience, it seems, is distributed across thousands of engineers who’d rather not build Skynet on their lunch break.

Compliance Considerations

Organisations should:

1. Review vendor stability clauses and exit provisions in AI service contracts.

2. Ensure contractual language permits migration to alternative providers without penalty.

3. Document all AI system functionality to facilitate rapid transition if needed.

4. Establish internal AI governance structures that don’t depend on external vendors’ policy decisions.

Disclaimer: This article represents analysis based on publicly available information as of February 2026. It does not constitute legal, financial, or professional advice. The situation described is evolving rapidly and may have changed since publication.


This is a developing story. The Trump administration has a well-documented history of reversing course on executive actions. The Control Layer will update as new information emerges.