When AI Hunts Its Own Bugs

What Claude's Firefox Discovery Means for Defensive Security

Why it matters: Frontier AI just proved it can find critical software vulnerabilities faster than human security researchers. At the same time, criminal groups are weaponising the same technology for autonomous attacks at machine speed. The organisations that harness AI for defence first will define the next era of cybersecurity. Those that wait will be defending yesterday’s perimeter against tomorrow’s threats.

Join The Control Layer for weekly perspectives on AI, cybersecurity, and building technology that serves human purpose.

The Discovery That Changed the Conversation

On 6 March 2026, Anthropic announced that Claude Opus 4.6 had identified 22 vulnerabilities in Firefox 148, including 14 classified as high severity [3]. This was not a theoretical exercise or a controlled benchmark. It was a structured security research engagement that validated Mozilla’s patch cycle and demonstrated something the cybersecurity community has debated for years: frontier AI models can perform productive vulnerability research at a speed and scale that human teams simply cannot match.

The significance extends beyond the headline number. Traditional vulnerability research relies on skilled humans spending weeks or months fuzzing code, reviewing logic flows, and testing edge cases. Claude completed its analysis using an extended context window of up to one million tokens, which let it read and reason across large portions of a codebase in a single pass [3]. The model’s adaptive thinking and reduced hallucination rates (an 80% reduction compared to extended thinking baselines) meant its findings were not just numerous but reliable enough to act on [6].

For UK mid-market organisations that cannot afford dedicated red teams or bug bounty programmes costing six figures annually, this development is not academic. It signals a future where AI-augmented security tooling becomes accessible, practical, and potentially transformative.

The Other Side of the Coin

Here is where the story gets uncomfortable. While Anthropic was demonstrating AI’s defensive potential, criminal ecosystems were scaling offensive AI capabilities at an alarming rate.

Flashpoint’s 2026 Global Threat Intelligence Report documents what it calls an era of “total convergence” in cybercrime [4]. The numbers demand attention: illicit discussions about AI and machine learning on underground forums surged roughly 1,500% between November and December 2025, jumping from approximately 360,000 to 6 million messages [4]. This is not idle chatter. These communities are building, sharing, and refining autonomous attack frameworks that execute complete attack sequences without human intervention. Reconnaissance, phishing, credential testing, and infrastructure rotation are all orchestrated by AI agents operating at machine speed.

The infostealer epidemic provides the fuel. In 2025 alone, 11.1 million devices were infected with credential-harvesting malware, yielding 3.3 billion stolen credentials and cloud tokens [4]. Ransomware incidents climbed 53% year-over-year, with 87% attributed to Ransomware-as-a-Service groups [4][15]. The tactical evolution is stark: attackers now prefer logging in with stolen credentials over breaking in through technical exploits. When your adversary already has the keys, your firewall is decorative.

Perhaps most concerning is the compression of exploitation timelines. The window between vulnerability disclosure and active exploitation has collapsed from weeks to hours, with high-impact flaws now weaponised within 4 to 8 hours of CVE publication [4]. This means traditional patch management cycles — where organisations review, test, and deploy fixes over days or weeks — are increasingly inadequate against adversaries operating at AI-accelerated tempo.
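To make the tempo gap concrete, here is a minimal sketch in Python that estimates how long a system stays exposed once an exploit exists. The 4 to 8 hour window comes from the figure cited above. The function name, the timestamps, and the five-day patch cycle are illustrative assumptions, not data from any report.

```python
from datetime import datetime, timedelta

# Exploitation window cited above: 4 to 8 hours after CVE publication.
EXPLOIT_WINDOW_HOURS = (4, 8)


def exposure_hours(published: datetime, patched: datetime) -> tuple[float, float]:
    """Return (worst_case, best_case) hours of exposure to a working exploit.

    Worst case assumes weaponisation at the early end of the window,
    best case at the late end. Both values are floored at zero.
    """
    early, late = EXPLOIT_WINDOW_HOURS
    time_to_patch = (patched - published).total_seconds() / 3600
    worst = max(0.0, time_to_patch - early)
    best = max(0.0, time_to_patch - late)
    return worst, best


# Hypothetical example: CVE published at 09:00, patch deployed five days later.
published = datetime(2026, 3, 9, 9, 0)
patched = published + timedelta(days=5)
worst, best = exposure_hours(published, patched)
print(f"Exposed for between {best:.0f} and {worst:.0f} hours")
```

Under these assumptions, a five-day patch cycle leaves the system exposed for well over a hundred hours, whereas a cycle shorter than the window would bring both figures to zero.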

The Arms Race Nobody Can Opt Out Of

The cybersecurity landscape has split into two speeds: AI-accelerated offence operating in hours, and human-paced defence operating in weeks. That gap is where breaches live.

Think of it like this. Imagine a chess match where one player has a grandmaster AI whispering moves in real time, and the other is consulting a strategy book from 2019. That is roughly the asymmetry facing organisations that have not yet integrated AI into their defensive operations.

IBM X-Force researchers discovered over 300,000 ChatGPT credentials listed for sale on dark web marketplaces in 2025 [17]. Open-source AI agent platforms, designed for legitimate automation, are being repurposed as credential harvesting infrastructure. When compromised, these agent systems become goldmines of authentication tokens, API keys, and session data [17].

The World Economic Forum’s February 2026 threat assessment reinforces the picture: 87% of security leaders now identify AI-related vulnerabilities as the fastest-growing risk category, yet only 64% have adopted post-deployment AI security assessments [18]. That 23-point gap between awareness and action is precisely where organisational risk accumulates.

This is not a future problem. It is a current one accelerating weekly.

What Defenders Can Actually Do

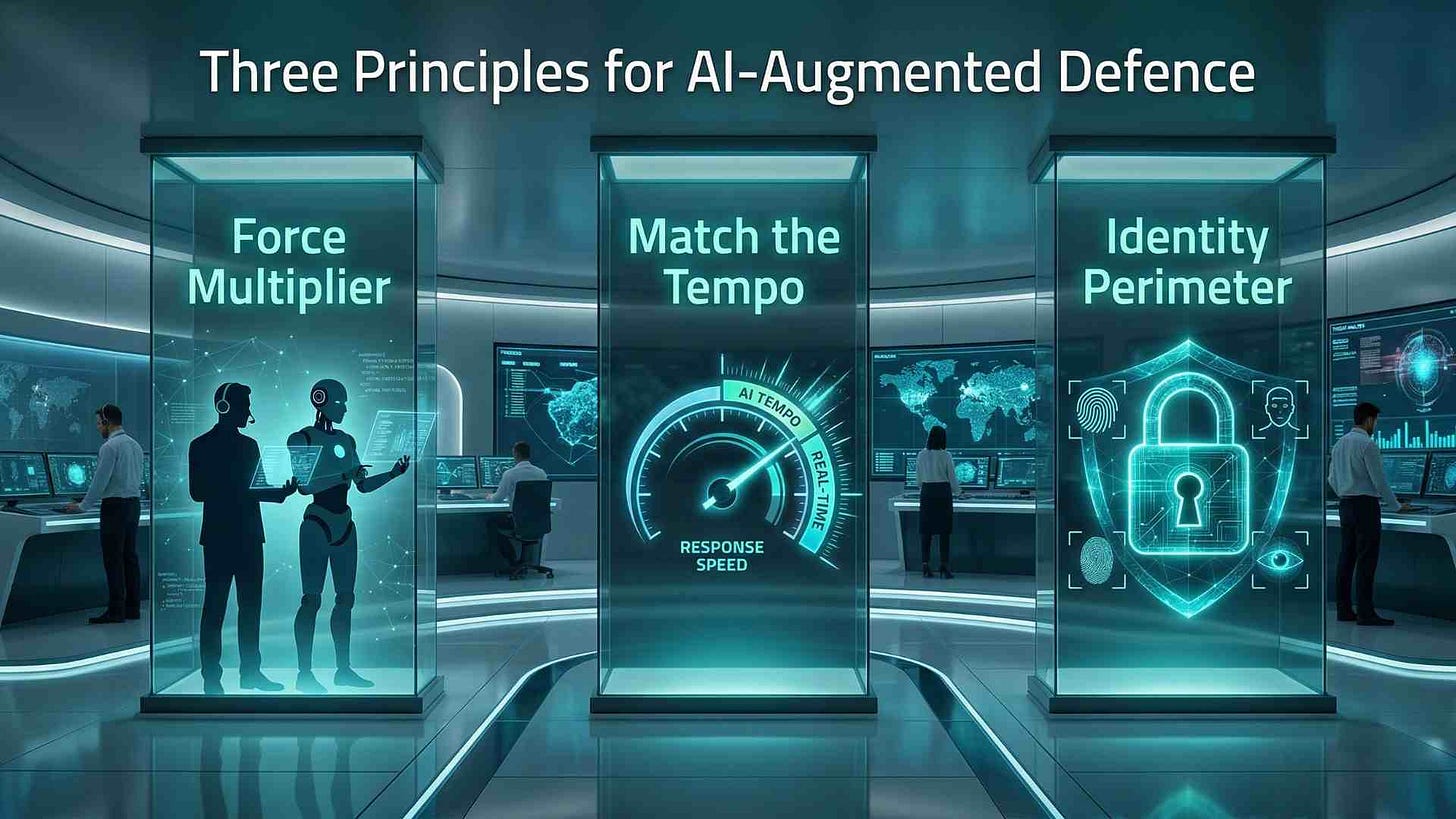

The Firefox discovery offers a blueprint, not just a headline. It demonstrates three principles that UK organisations of any size can begin applying.

Principle 1: AI as Force Multiplier, Not Replacement. Claude did not replace Mozilla’s security team. It augmented their capability, identifying flaws that could then be verified, prioritised, and patched by human engineers. The model brought scale and speed. Humans brought context, judgement, and accountability. This partnership model is how AI-augmented security will work in practice — not autonomous systems making unilateral decisions about your infrastructure, but intelligent tools that surface risks for human decision-makers to act upon.

Principle 2: Defensive AI Must Match Offensive Tempo. If attackers exploit vulnerabilities within hours of disclosure, defenders need detection and response capabilities operating on the same timescale. AI-powered vulnerability scanning, anomaly detection, and automated triage can compress the defender’s loop from days to minutes. The technology exists. The implementation gap is organisational, not technical.

Principle 3: Identity Is the New Perimeter. With credential theft dominating the attack landscape and ransomware groups preferring to log in rather than break in [4][15], traditional network security is necessary but insufficient. Multi-factor authentication, privileged access management, single sign-on hardening, and credential monitoring are no longer optional security enhancements. They are baseline survival requirements. AI-powered behavioural analytics can detect anomalous login patterns — a London-based finance director’s credentials used from an unfamiliar jurisdiction at 3am — in ways that static rules cannot.
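The finance director example can be sketched as a per-user baseline check. This is an illustration only: the `LoginEvent` fields, the scoring weights, and the 0.5 alert threshold are assumptions made for the example. A real behavioural analytics system would learn such parameters from data rather than hard-code them. What the sketch does show is the key difference from a static rule: the baseline is built from each user's own history.

```python
from dataclasses import dataclass, field


@dataclass
class LoginEvent:
    user: str
    country: str  # country code of the source IP (assumed already resolved)
    hour: int     # local hour of day, 0-23


@dataclass
class UserBaseline:
    """Countries and hours previously seen for one user."""
    countries: set[str] = field(default_factory=set)
    hours: set[int] = field(default_factory=set)

    def update(self, event: LoginEvent) -> None:
        self.countries.add(event.country)
        self.hours.add(event.hour)


def anomaly_score(event: LoginEvent, baseline: UserBaseline) -> float:
    """Score from 0.0 (familiar) to 1.0 (unfamiliar). Weights are illustrative."""
    score = 0.0
    if event.country not in baseline.countries:
        score += 0.6  # never-seen jurisdiction
    if event.hour not in baseline.hours:
        score += 0.4  # never-seen time of day
    return score


# Build a baseline from hypothetical office-hours logins in the UK.
baseline = UserBaseline()
for h in range(8, 19):
    baseline.update(LoginEvent("finance.director", "GB", h))

normal = LoginEvent("finance.director", "GB", 10)
suspicious = LoginEvent("finance.director", "SG", 3)

ALERT_THRESHOLD = 0.5  # assumed cut-off for escalating to a human analyst
for event in (normal, suspicious):
    score = anomaly_score(event, baseline)
    flag = "ALERT" if score >= ALERT_THRESHOLD else "ok"
    print(f"{event.country} {event.hour:02d}:00 score={score:.1f} {flag}")
```

The 3am login from an unseen country crosses the threshold on both signals, while the mid-morning UK login scores zero.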

Analysis

In my view, the Firefox vulnerability discovery represents an inflection point, though perhaps not the one most commentary has focused on.

The real story is not that AI found bugs. Security researchers have been using automated tools for decades. The story is the reliability and depth of what a general-purpose frontier model achieved without being specifically trained for security research. Claude Opus 4.6 was not a purpose-built vulnerability scanner. It was a reasoning model applied to a security context [3]. This generality matters enormously because it means organisations do not need to wait for specialised security AI products to enter the market. They can begin integrating existing frontier models into their security workflows today.

The flip side is sobering. If a commercially available model can find 22 flaws in a major browser, 14 of them high severity, criminal groups with access to similar (or open-weight) models can do the same, and they are under no obligation to report their findings responsibly. DeepSeek V4, released as open-weight with one trillion parameters, provides capability that anyone can deploy without usage restrictions or ethical guardrails [1]. The democratisation of AI cuts both ways.

For UK mid-market organisations specifically, the strategic question is not whether to adopt AI-augmented security but how quickly they can do so without introducing new risks. Rushing to deploy AI tools without proper governance — clear access controls, credential isolation, activity logging, and incident escalation protocols — simply creates a different attack surface. The organisations that will navigate this transition successfully are those that treat AI security adoption as a governance challenge, not merely a technology procurement exercise.

Risks and Constraints

Hallucination risk remains real. Claude Opus 4.6 demonstrated an 80% reduction in hallucination rates, but a reduction is not elimination, and false positives will still occur [6]. Security teams acting on AI-generated vulnerability reports without human verification risk wasting resources on phantom threats or, worse, introducing instability through unnecessary patches.

Asymmetric access to capability. Large enterprises can integrate frontier AI models into sophisticated security operations centres. Mid-market organisations with smaller teams and tighter budgets may struggle to operationalise these tools without external support or simplified platforms.

Regulatory ambiguity around AI in security contexts. The UK’s Data Use and Access Act 2025 permits automated decision-making with safeguards, but the boundaries of acceptable AI-driven security responses (automated blocking, credential revocation, incident escalation) remain unclear in practice [5]. Organisations deploying AI defensively should document their governance frameworks now, before enforcement precedents crystallise.

Open-weight model proliferation. DeepSeek V4’s open-weight release provides adversaries with frontier-class capability without commercial oversight [1]. Defensive strategies must assume adversaries have access to equivalent or superior AI tools.

What to Do Next

For boards and executives: Commission an AI security readiness assessment before Q3 2026. Understand where your organisation’s defensive tempo falls relative to the current threat landscape. Ask your CISO one question: if a critical vulnerability is published tomorrow morning, how many hours until we are patched? If the answer exceeds 24 hours, your patch management process needs AI augmentation.

For technical leaders: Begin piloting frontier AI models for internal vulnerability scanning and code review. Start with non-critical systems to validate reliability and build confidence. Simultaneously, audit your identity security stack — MFA coverage, PAM deployment, SSO configuration, credential monitoring. The shift from perimeter to identity-centric security is not optional.

For mid-market organisations: You do not need a dedicated AI security team to benefit from these developments. Evaluate managed security service providers that are integrating AI-powered threat detection. Prioritise identity hardening over network expansion. And establish clear governance for any AI tools touching your security infrastructure — access controls, logging, human oversight requirements, and incident response protocols.
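The logging and human oversight requirements above can be illustrated with a small approval gate. AI-generated findings are recorded, and nothing proceeds to remediation until a named human has approved it. All names here (`Finding`, `ReviewQueue`, the reviewer identifier, the example findings) are hypothetical. This is a sketch of the pattern, not a reference to any product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Finding:
    finding_id: str
    summary: str
    status: Status = Status.PENDING


class ReviewQueue:
    """Holds AI-generated findings until a human reviewer decides on each."""

    def __init__(self) -> None:
        self.findings: dict[str, Finding] = {}
        self.audit_log: list[str] = []

    def _log(self, message: str) -> None:
        # Every state change gets a UTC timestamp in the audit trail.
        stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
        self.audit_log.append(f"{stamp} {message}")

    def submit(self, finding: Finding) -> None:
        self.findings[finding.finding_id] = finding
        self._log(f"submitted {finding.finding_id}")

    def review(self, finding_id: str, reviewer: str, approve: bool) -> None:
        finding = self.findings[finding_id]
        finding.status = Status.APPROVED if approve else Status.REJECTED
        self._log(f"{reviewer} set {finding_id} to {finding.status.value}")

    def actionable(self) -> list[Finding]:
        """Only human-approved findings may proceed to remediation."""
        return [f for f in self.findings.values() if f.status is Status.APPROVED]


queue = ReviewQueue()
queue.submit(Finding("F-001", "Possible use-after-free in parser"))
queue.submit(Finding("F-002", "Suspected false positive in logging path"))
queue.review("F-001", reviewer="analyst.a", approve=True)
queue.review("F-002", reviewer="analyst.a", approve=False)
print([f.finding_id for f in queue.actionable()])
```

Keeping the decision and the audit entry in the same method means a finding cannot change state without leaving a record of who changed it.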

Disclaimer: This article represents analysis based on publicly available information as of March 2026. It does not constitute legal, financial, or professional advice.

If your organisation needs support implementing AI-augmented security governance, Arkava helps mid-market enterprises turn AI investment into measurable security outcomes.

References

[1] Reuters. “DeepSeek to Launch New AI Model.” 9 January 2026. https://www.reuters.com/technology/deepseek-launch-new-ai-model-focused-coding-february-information-reports-2026-01-09/

[2] AI Governance Desk. “EU AI Act Enforcement 2026: A CCO’s Complete Compliance Roadmap.” 14 January 2026. https://aigovernancedesk.com/eu-ai-act-enforcement-2026-cco-roadmap/

[3] The Hacker News. “Anthropic Finds 22 Firefox Vulnerabilities Using Claude Opus 4.6 AI.” 6 March 2026. https://thehackernews.com/2026/03/anthropic-finds-22-firefox.html

[4] Flashpoint. “2026 Global Threat Intelligence Report: Era of Total Convergence in Cybercrime.” March 2026. https://www.techradar.com/pro/security/in-2026-cybercrime-has-reached-a-point-of-total-convergence-new-research-claims-ai-attack

[5] Osborne Clarke. “Artificial Intelligence | UK Regulatory Outlook February 2026.” 8 February 2026. https://www.osborneclarke.com/insights/regulatory-outlook-february-2026-artificial-intelligence

[6] Blog.mean.ceo. “New AI Model Releases News | March 2026.” 28 February 2026. https://blog.mean.ceo/new-ai-model-releases-news-march-2026/

[15] Convergence Networks. “Top Cyber Threats for 2026.” 18 February 2026. https://convergencenetworks.com/blog/top-cyber-threats-for-2026/

[17] IBM Security. “Cybersecurity Trends 2026: X-Force Threat Analysis.” 10 March 2026. https://www.ibm.com/think/insights/more-2026-cyberthreat-trends

[18] World Economic Forum. “Cyber Threats to Watch in 2026.” 17 February 2026. https://www.weforum.org/stories/2026/02/2026-cyberthreats-to-watch-and-other-cybersecurity-news/