What 100,000 Claude chats really tell us about AI productivity

Inside Anthropic’s Claude data: where AI saves time, where it does not, and what that means for UK and EU leaders.

Why it matters

Anthropic analysed 100,000 real Claude.ai conversations and estimated that AI cut task time by about 80 per cent on average.

If you scale those gains across the US economy, they imply labour productivity growth of about 1.8 per cent a year for a decade, roughly double recent trends.

For the UK and EU, where productivity growth has bumped along at around 0.5–1 per cent a year since the financial crisis, that would be a revolution, not a rounding error.

The trick is turning these theoretical gains into practical change inside organisations without believing the hype or breaking the people.

The study in one paragraph

Anthropic used a privacy-preserving method to sample 100,000 real Claude.ai conversations, asked Claude to estimate how long the underlying task would take a competent human without AI, then compared that to how long the user and Claude actually spent together. On average, the tasks would have taken about 1.4 hours of human time and about $54 worth of labour, and Claude cut the task time by around 80 per cent. Extrapolated to the whole US economy, assuming “universal adoption” over ten years, they estimate a 1.8 per cent annual boost to labour productivity, driven mostly by software, management, marketing, teaching and customer service tasks.

This is not a prediction. It is a scenario based on how people currently use Claude. But it is a useful scenario if you care about AI strategy.
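To see what the headline rate means once it compounds, here is a minimal sketch. The 1.8 per cent figure is the study's scenario. The 0.9 per cent comparison is my own assumption, standing in for "recent trends" at half the scenario rate, and the function name is illustrative.

```python
def cumulative_gain(annual_growth: float, years: int) -> float:
    """Total productivity gain after compounding a constant annual growth rate."""
    return (1 + annual_growth) ** years - 1


scenario = cumulative_gain(0.018, 10)  # the study's 1.8 per cent a year
baseline = cumulative_gain(0.009, 10)  # assumed: half the scenario rate

print(f"Scenario: output per hour up {scenario:.1%} after ten years")
print(f"Baseline: output per hour up {baseline:.1%} after ten years")
```

Under these assumptions the scenario leaves output per hour roughly a fifth higher after a decade, against under a tenth on the assumed baseline.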

What the findings actually say

1. People already use AI for meaningful work

This is not a lab study where students write fake memos for beer money. These are real users doing their own work.

Claude is handling tasks such as:

Curriculum and lesson planning for teachers

Drafting legal and management documents

Financial analysis and memo writing

Software development, debugging and documentation

Across this mix, the average conversation represents work that would otherwise cost roughly $54 in professional labour. That is not trivial. It is the sort of task that clogs knowledge workers’ days.

2. Time savings are large, but uneven

Anthropic’s model estimates:

Median time saving per task around 80–84 per cent

Some information-heavy tasks, like compiling information from reports, hit 90–95 per cent

Others, such as checking diagnostic images, show only around 20 per cent saving

These numbers are bigger than the gains seen in controlled trials of generative AI for writing and customer service, which usually find productivity improvements in the 14–40 per cent range.

Why the gap? Because the Anthropic method looks only at the time visible in the chat window, not the additional human work needed to validate, edit and implement the output. It probably overstates today’s end-to-end gains. But it does correctly highlight where AI is most powerful: reading, writing and data manipulation.
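A rough worked example shows how quickly the gap closes once off-screen work is counted. The 84 minute baseline and the 80 per cent saving come from the study. The 35 minutes of review is a hypothetical figure I have picked for illustration, not something the study measures.

```python
def time_saving(baseline_min: float, with_ai_min: float, overhead_min: float = 0.0) -> float:
    """Fraction of baseline time saved once off-screen overhead is added back."""
    if baseline_min <= 0:
        raise ValueError("baseline_min must be positive")
    return 1 - (with_ai_min + overhead_min) / baseline_min


baseline = 84.0                   # 1.4 hours, the study's average task
in_chat = baseline * (1 - 0.80)   # time left after the headline 80 per cent saving
review = 35.0                     # hypothetical: validating, editing and implementing

print(f"Chat-window saving: {time_saving(baseline, in_chat):.0%}")
print(f"End-to-end saving:  {time_saving(baseline, in_chat, review):.0%}")
```

With that assumed overhead, the end-to-end saving drops from 80 per cent to a little under 40 per cent, which is back inside the range the controlled trials report.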

3. High-wage, high-complexity jobs see the biggest task sizes

The analysis links tasks to US occupational data. In Claude’s sample:

Management and legal tasks cluster around 1.8–2 hours of human work each

Education and arts/media tasks are around 1.6–1.7 hours

Food preparation, transport and basic maintenance tasks are closer to 20–30 minutes

Higher-wage occupations also tend to have longer tasks in the sample. That makes sense: senior managers, lawyers or software engineers ask Claude to help with chunky problems, not just grammar fixes.

For boards and CFOs, this is a simple message: the largest potential gains are in high-wage, information-dense roles, not in frontline physical work. That is where your early AI investments should focus.

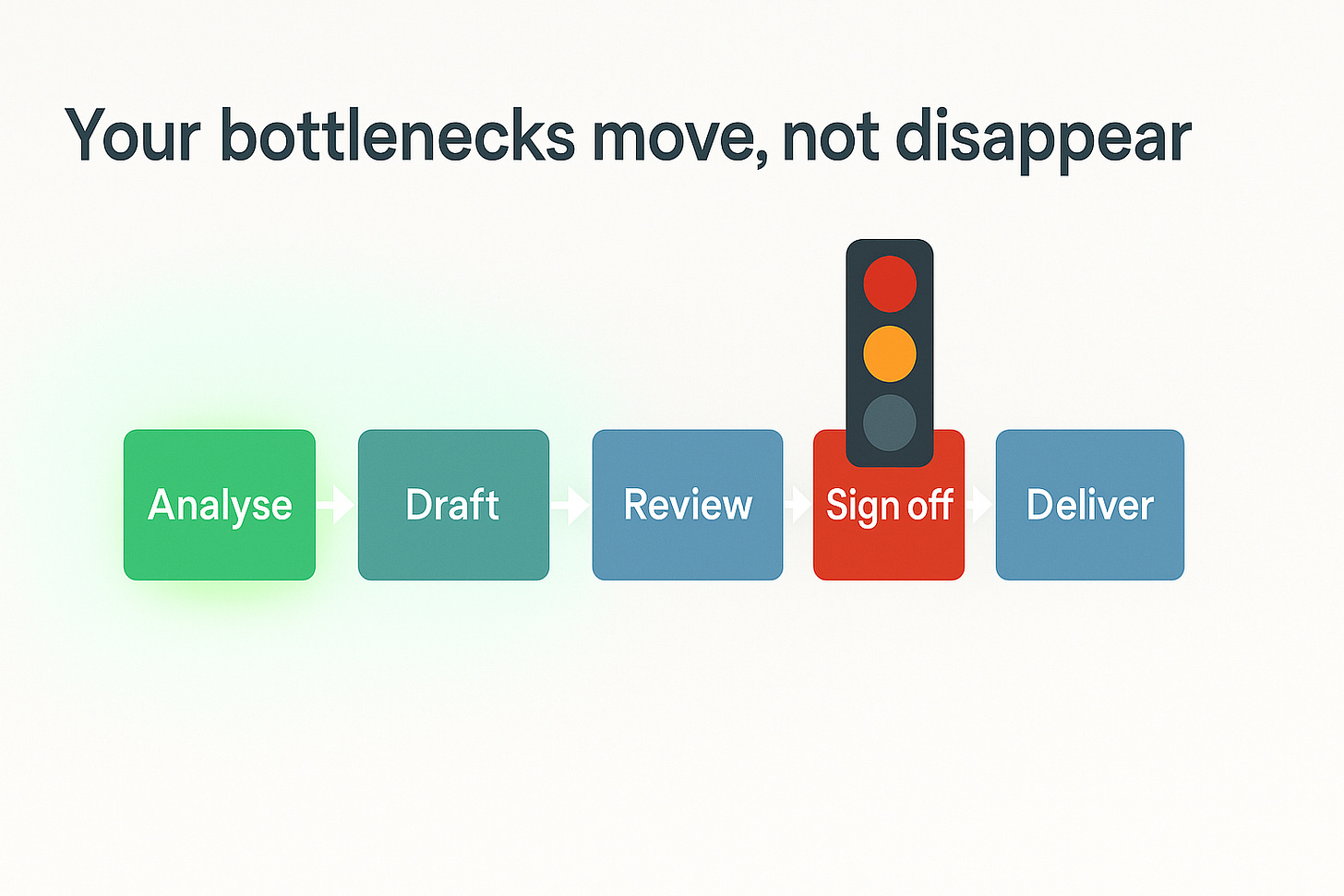

4. AI creates new bottlenecks

If you accelerate one part of a workflow, the slow bits elsewhere suddenly matter a lot more. Anthropic illustrate this clearly:

Software developers can speed up code creation, testing and documentation, but not supervising others or aligning on product decisions.

Teachers can accelerate lesson planning, but not managing behaviour or running extracurricular activities.

Customer service representatives can draft responses faster, but still need time for complex phone calls or complaint escalation.

Economists call this the Baumol effect: growth ends up constrained by the tasks that are essential but hard to automate.

In practice, that means a future where your engineers ship code faster but wait just as long for security review, compliance sign-off and change approvals. Unless you redesign the process, AI will pour productivity into the sand.

How to use these findings inside an organisation

Here are the practical lessons I would take from this work.

1. Design around tasks, not job titles

Anthropic build their analysis on task taxonomies such as O*NET, not on generic job descriptions.

O*NET is the United States’ free online database of occupational information covering over 900 job profiles and 55,000 jobs, providing comprehensive data on skills, knowledge, abilities, tasks, and requirements for each occupation.

Pick a high-value role such as software engineer, project manager, lawyer or finance business partner.

List their recurring tasks for a typical month.

For each task, estimate:

Time per occurrence

Frequency

Current pain points

Then ask two questions:

Can AI help with this task directly?

Would that materially change the economics of the role?

You will quickly see a pattern: a small set of reading, writing and analysis tasks eats a lot of time and is very AI-friendly. That is your first pilot wave.
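The inventory can live in a spreadsheet, but a minimal sketch makes the ranking logic explicit. Everything here is illustrative: the `Task` fields, the `pilot_candidates` helper and the sample project manager inventory are my own assumptions, not part of Anthropic's method.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    minutes_per_occurrence: float
    occurrences_per_month: int
    ai_friendly: bool  # your own judgement: is it mostly reading, writing or analysis?

    @property
    def hours_per_month(self) -> float:
        return self.minutes_per_occurrence * self.occurrences_per_month / 60


def pilot_candidates(tasks: list[Task]) -> list[Task]:
    """AI-friendly tasks, largest monthly time cost first."""
    friendly = [t for t in tasks if t.ai_friendly]
    return sorted(friendly, key=lambda t: t.hours_per_month, reverse=True)


# Hypothetical inventory for a project manager.
inventory = [
    Task("Weekly status report", 90, 4, True),
    Task("Steering committee meeting", 120, 2, False),
    Task("Risk register update", 45, 4, True),
    Task("Supplier contract review", 240, 1, True),
]

for task in pilot_candidates(inventory):
    print(f"{task.name}: {task.hours_per_month:.1f} h/month")
```

Sorting by monthly hours rather than time per occurrence matters: a short task done every week can outweigh a long one done once a quarter.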

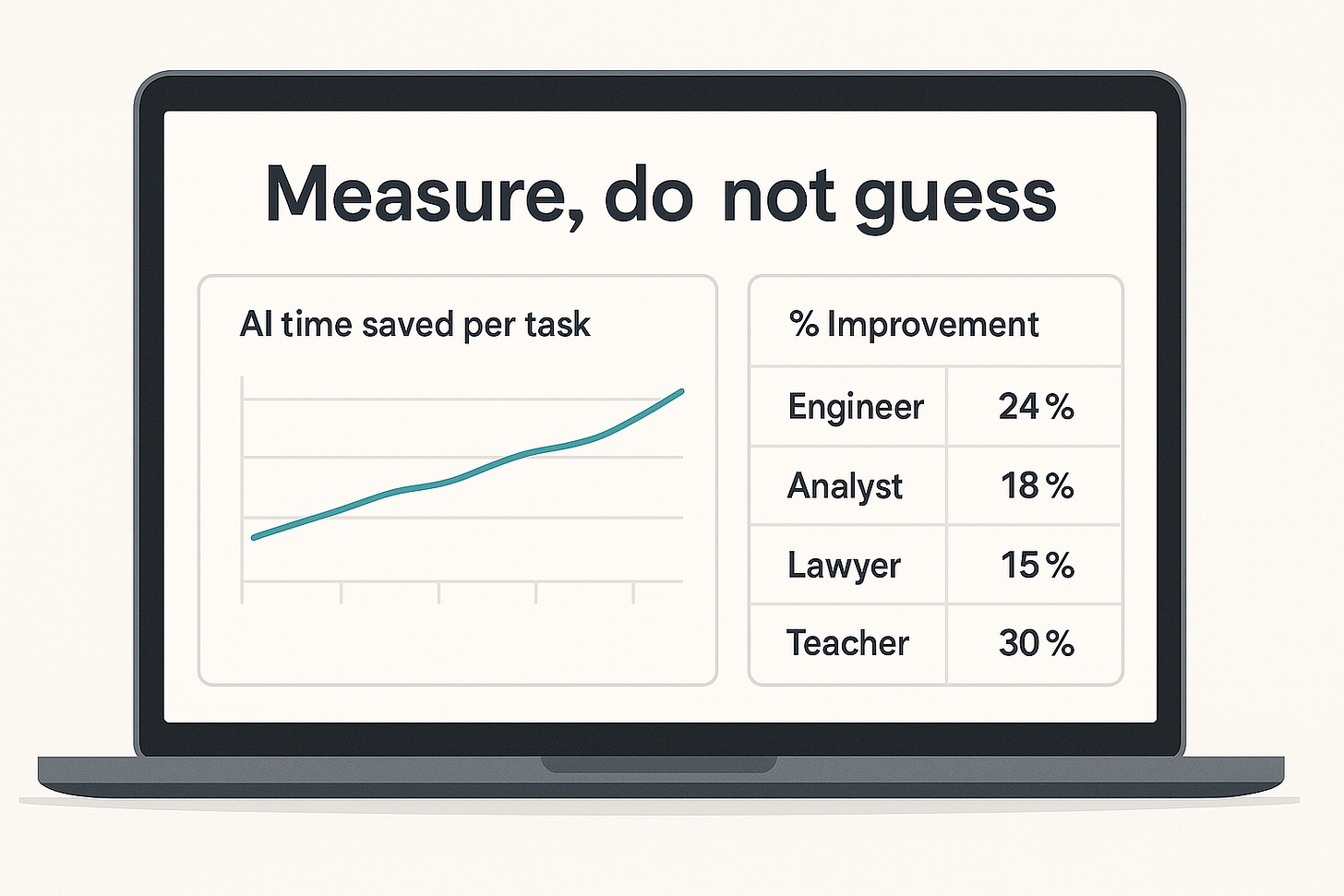

2. Build a simple internal “AI Economic Index”

Anthropic are trying to track productivity at the model level. You can steal the idea for your own estate.

A minimal internal index:

Track a small cohort of knowledge workers across a fixed period.

For a chosen set of tasks, ask them to log:

Time taken without AI (historical or estimated)

Time taken with AI assistance

Quality rating compared to their usual standard

Use timesheet data or ticketing systems where you have them. Do not rely only on vibes.

You do not need perfect econometrics. Even directional data on ten common tasks will show whether the gains you see resemble the 14–40 per cent world of randomised controlled trials (RCTs) or the 80 per cent chat window world.

That is enough to prioritise investment and push back on vendor fairy tales.
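As a sketch of how little machinery such an index needs, the snippet below summarises a handful of logged tasks. The log rows, the three-point quality scale and the `index_by_task` helper are all hypothetical, chosen only to mirror the three fields listed above.

```python
from statistics import median

# Hypothetical log rows: (task, minutes without AI, minutes with AI, quality delta).
# Quality delta is the worker's rating against their usual standard: -1, 0 or +1.
log = [
    ("Draft client memo", 90, 40, 0),
    ("Draft client memo", 80, 50, 1),
    ("Summarise report", 60, 15, 0),
    ("Summarise report", 70, 20, -1),
    ("Summarise report", 50, 10, 0),
]


def index_by_task(rows):
    """Median time saving and mean quality delta for each task."""
    grouped = {}
    for task, before, after, quality in rows:
        grouped.setdefault(task, []).append((1 - after / before, quality))
    return {
        task: {
            "median_saving": median(saving for saving, _ in entries),
            "mean_quality_delta": sum(q for _, q in entries) / len(entries),
            "n": len(entries),
        }
        for task, entries in grouped.items()
    }


for task, stats in index_by_task(log).items():
    print(f"{task}: saving {stats['median_saving']:.0%}, "
          f"quality {stats['mean_quality_delta']:+.2f}, n={stats['n']}")
```

I use the median rather than the mean so that one unusually good or bad session does not dominate a small sample.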

3. Treat AI time estimates as scaffolding, not gospel

Anthropic validate Claude’s time estimates against real Jira data for software teams. Humans estimating their own tasks hit a correlation of about 0.5 with actual completion times. Claude manages about 0.44, which is similar in direction but compressed: it overestimates short tasks and underestimates long ones.

So:

You can use model-based estimates to compare tasks to each other.

You should not use them alone to sign off a business case or decide headcount.

A sensible move is to ask both humans and AI to estimate task time, then compare that to real tracked data every quarter. If the model proves consistently biased, you correct for it.

If it proves better than your managers at estimating work (which is not a high bar), you have gained a surprisingly useful planning tool.
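The quarterly comparison can be sketched in a few lines. I use a Spearman rank correlation here because it asks only whether the estimator puts tasks in the right order. That choice is my assumption, since this article does not say which correlation measure the study used. All the numbers are invented for illustration.

```python
def ranks(values):
    """Average ranks (1-based), with tied values sharing a rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = shared
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; assumes neither list is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


actual_hours = [1, 2, 4, 8, 16]    # hypothetical tracked completion times
model_hours = [2, 3, 4, 6, 9]      # hypothetical model estimates: right order, compressed range
manager_hours = [2, 1, 6, 5, 12]   # hypothetical human estimates: two pairs out of order

print(f"Model rank correlation:   {spearman(actual_hours, model_hours):.2f}")
print(f"Manager rank correlation: {spearman(actual_hours, manager_hours):.2f}")
for actual, estimate in zip(actual_hours, model_hours):
    print(f"actual {actual:>2} h, model {estimate} h, ratio {estimate / actual:.2f}")
```

The ratio column is where compression shows up: it sits above 1 for short tasks and below 1 for long ones, even when the ranking is perfect. That is why a good correlation alone does not make the absolute numbers safe for a business case.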

4. Plan explicitly for bottlenecks

The report’s biggest insight is not the headline 1.8 per cent productivity gain. It is the observation that some tasks barely move.

If AI turns a three-hour analysis into a 30-minute one, but your steering committee still meets only once a fortnight, the delivery speed of the whole system has not changed. You just have more polished decks on the shelf.

So for each high gain task, ask:

What upstream work constrains throughput?

What downstream approvals, handoffs or audits slow us down?

Can AI assist there as well, or do we need process change and delegation?
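The arithmetic behind this is worth doing once. The sketch below borrows Amdahl's law from computing, which is my framing rather than anything in the report: if only part of a workflow gets faster, the untouched part caps the overall gain. The 80 hours of waiting is a hypothetical stand-in for a fortnightly approval cycle.

```python
def overall_speedup(accelerated_share: float, task_speedup: float) -> float:
    """Amdahl-style speedup of a whole workflow when only part of it gets faster."""
    if not 0 <= accelerated_share <= 1:
        raise ValueError("accelerated_share must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    return 1 / ((1 - accelerated_share) + accelerated_share / task_speedup)


# A three hour analysis becomes 30 minutes, so that step is 6x faster.
analysis_before_h, analysis_after_h = 3.0, 0.5
# Hypothetical: the rest of the cycle is 10 working days of waiting, at 8 hours a day.
wait_h = 10 * 8.0

share = analysis_before_h / (analysis_before_h + wait_h)
speedup = overall_speedup(share, analysis_before_h / analysis_after_h)

print(f"Share of cycle time that AI touches: {share:.1%}")
print(f"Whole-cycle speedup: {speedup:.2f}x")
```

Under these assumptions a sixfold gain on the analysis shortens the whole cycle by only about 3 per cent. Halving the wait would do far more than any further improvement to the analysis step.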

This is where boards and regulators matter. Europe already has a decade-long productivity problem.

If we add AI to existing bureaucracy without changing any rules, most of the value will evaporate into email chains and committees.

5. Put quality and risk on the same footing as time

Randomised trials tend to find that generative AI does two things at once: it speeds people up and raises average quality, especially for less experienced workers.

The Anthropic study focuses on time, not quality. That is understandable, but risky to copy inside a regulated firm.

Practical guardrails:

For each pilot, define a baseline quality metric: defect rate, escalation rate, complaints, rework.

Require that any AI time saving is at least quality neutral, and ideally quality positive.

Hard rule: in safety-critical or high-stakes contexts (healthcare decisions, legal conclusions, critical infrastructure), AI output must be reviewed by a qualified professional until regulators say otherwise.

The aim is not to turn professionals into rubber stamps. It is to use AI as a force multiplier on judgement, not a replacement for it.
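The guardrails above can be written down as a simple acceptance check for pilots. This is a sketch under my own assumptions: defect rate stands in for whichever baseline quality metric you choose, and the `PilotResult` fields and `passes_gate` name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    time_saving: float           # fraction of baseline time saved, e.g. 0.35
    baseline_defect_rate: float  # defects per 100 items before the pilot
    pilot_defect_rate: float     # defects per 100 items during the pilot
    high_stakes: bool            # healthcare, legal conclusions, critical infrastructure
    professional_review: bool    # a qualified professional reviews the AI output


def passes_gate(result: PilotResult, tolerance: float = 0.0) -> bool:
    """Accept only pilots that save time, hold quality, and review high-stakes output."""
    if result.high_stakes and not result.professional_review:
        return False
    quality_neutral = result.pilot_defect_rate <= result.baseline_defect_rate + tolerance
    return result.time_saving > 0 and quality_neutral


faster_but_sloppier = PilotResult(0.40, 2.0, 3.5, False, False)
faster_and_cleaner = PilotResult(0.25, 2.0, 1.8, False, False)
unreviewed_legal = PilotResult(0.50, 2.0, 1.0, True, False)

for name, pilot in [("faster but sloppier", faster_but_sloppier),
                    ("faster and cleaner", faster_and_cleaner),
                    ("unreviewed legal work", unreviewed_legal)]:
    print(f"{name}: {'pass' if passes_gate(pilot) else 'fail'}")
```

The review check runs first on purpose, so that no time or quality result can buy an exemption from it.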

What this means for UK and EU leaders

The UK has had weak labour productivity growth since the 2008 crisis, hovering around 0.5 per cent a year. The EU has seen similar stagnation, with post-crisis productivity growth roughly half its pre-2007 pace.

If analysis like Anthropic’s is even directionally right, current generation AI could add one to two percentage points to annual labour productivity growth over a decade if widely adopted and properly integrated. That would not fix everything, but it would materially shift wage growth, tax receipts and fiscal space.

The catch is that these gains will not appear automatically, and they will not be evenly spread:

Software, professional services, education and central functions will see big gains.

Physical, care and frontline work will change more slowly.

The real limiter may be organisational willingness to redesign processes, job roles and accountability.

Governments and regulators have a part to play too. They need to set clear guardrails for safety, transparency and accountability without raising compliance costs so high that only US hyperscalers and the very largest incumbents can afford to play. That balance is still a work in progress under the EU AI Act and emerging UK regulation.

What to do next

For boards, CIOs and transformation leaders, here is a simple action list:

Run a task-level inventory for two or three target roles, focusing on high-wage, document-heavy work.

Pilot AI on a defined set of tasks and measure time and quality, not just anecdotal excitement.

Build a basic internal AI productivity index, updating quarterly, so the conversation with finance is evidence rather than faith.

Identify and fix bottlenecks where faster analysis still sits inside slow governance or legacy processes.

Invest in AI literacy and workflow design, not just licences, so people can actually translate these model-level gains into business outcomes.

Stay honest about limitations: today’s models are powerful and improving quickly, but they are not magic, and your organisation is not suddenly exempt from basic economics.

Used wisely, studies like this one are less about the exact number and more about the direction of travel. They tell us where AI is already strong, where it is weak, and where leaders should stop dreaming about “AI strategy” and start fixing the plumbing of work itself.

References

[1] Anthropic, Estimating AI productivity gains from Claude conversations, November 2025.

[2] Noy, S., Zhang, W., Experimental evidence on the productivity effects of generative AI for professional writing tasks, Science, 2023.

[3] Brynjolfsson, E. et al., Generative AI at Work, Quarterly Journal of Economics, 2025; plus Stanford/MIT customer service RCT summaries.

[4] Acemoglu, D., The Simple Macroeconomics of AI, MIT working paper, 2024.

[5] Filippucci, F., Gal, P., Schief, M., Miracle or myth? Assessing the macroeconomic productivity gains from AI, OECD, 2024–2025.

[6] METR, Measuring AI Ability to Complete Long Tasks, 2025.

[7] ONS, Labour productivity statistics and House of Commons Library briefing SN02791, 2025.

[8] CaixaBank Research, Productivity growth in Europe: low, uneven and slowing, 2024; OECD, Strengthening productivity and the Single Market, 2025.