Claude Code Gets Smarter — and Safer: Anthropic’s New AI Security Layer Could Redefine How We Build Software

The race to integrate AI into software development just took a significant turn. Anthropic — the AI safety-driven company behind the Claude family of models — has introduced automated security review capabilities for its AI coding assistant, Claude Code. This move positions Anthropic as a front-runner in addressing a growing and often under-acknowledged risk: AI-generated vulnerabilities slipping into production code.

With AI coding assistants already changing how engineers write, refactor, and ship code, Anthropic’s timing is strategic — and possibly crucial. While competitors tout speed and automation, Anthropic appears intent on making security the non-negotiable foundation for AI-assisted development.

The Announcement: Security as a First-Class Feature

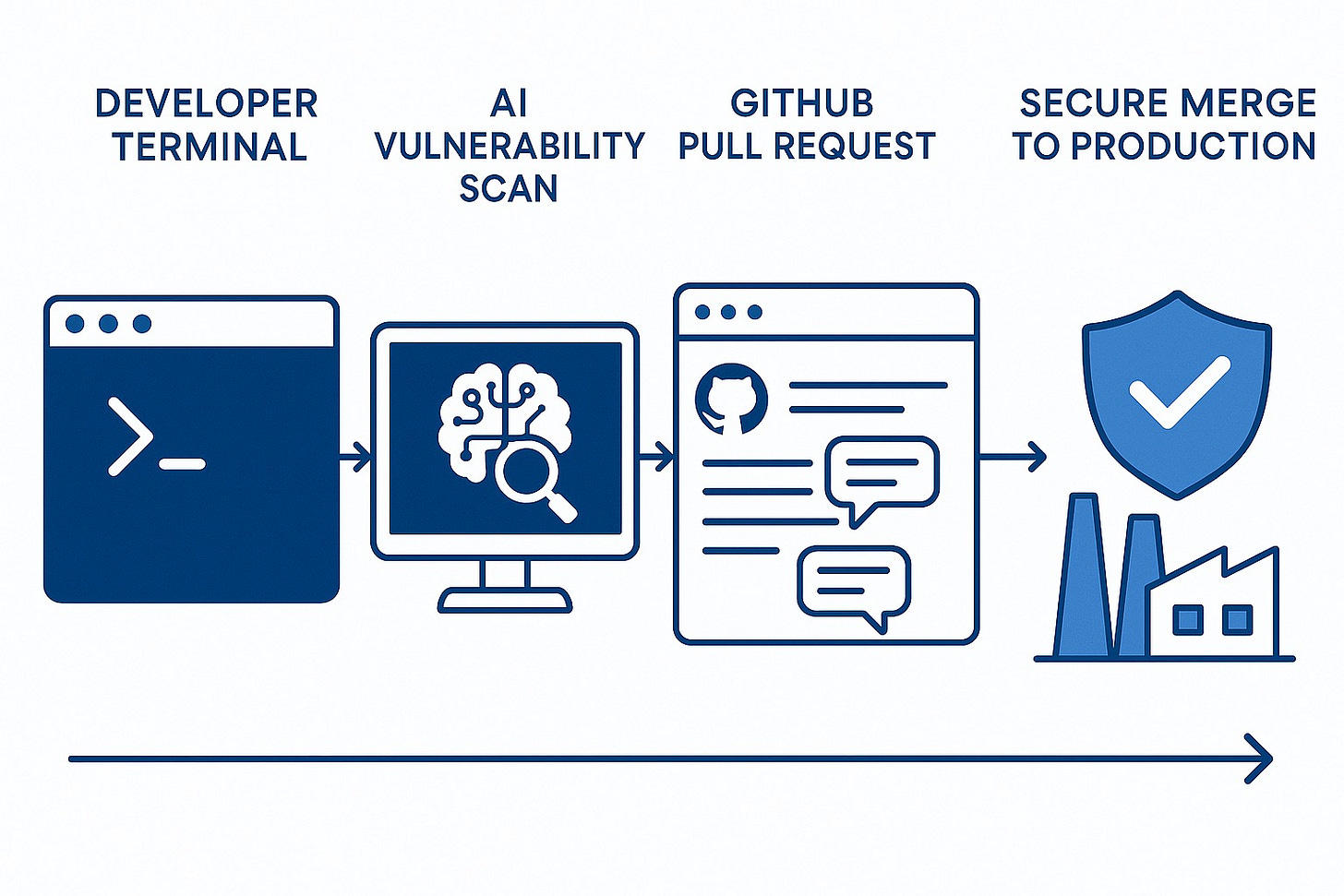

This week, Anthropic unveiled a new /security-review command and a GitHub Actions integration for Claude Code. Together, they allow developers to spot vulnerabilities and receive actionable fixes without leaving their workflow.

In practical terms, this means:

From the terminal: Developers can trigger a security scan on-demand before committing code, catching issues before they’re even reviewed by human peers.

In GitHub pull requests: Every new pull request can be automatically scanned. Detected vulnerabilities appear as inline comments, complete with remediation suggestions.

Customisable rules: Developers and security teams can fine-tune rules to reduce false positives, focusing only on relevant, high-risk patterns.
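Claude Code’s own rule format isn’t described here, so the following is only a generic sketch of the idea behind customisable rules: post-filtering a scanner’s findings by minimum severity, excluded rule categories, and excluded paths. The `Finding` record, rule names, and severity levels are hypothetical, chosen purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical finding record; Claude Code's real output format may differ.
@dataclass(frozen=True)
class Finding:
    rule: str       # e.g. "sql-injection"
    severity: str   # "low", "medium", "high", or "critical"
    path: str       # file the finding points at

SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}

def filter_findings(findings, min_severity="high",
                    ignored_rules=frozenset(), ignored_paths=()):
    """Keep findings at or above min_severity, skipping ignored rules and path prefixes."""
    threshold = SEVERITY_RANK[min_severity]
    kept = []
    for f in findings:
        if SEVERITY_RANK[f.severity] < threshold:
            continue  # below the team's severity bar
        if f.rule in ignored_rules:
            continue  # rule category the team has opted out of
        if any(f.path.startswith(prefix) for prefix in ignored_paths):
            continue  # e.g. test fixtures that intentionally contain bad patterns
        kept.append(f)
    return kept

findings = [
    Finding("sql-injection", "critical", "app/db.py"),
    Finding("weak-hash", "medium", "app/auth.py"),
    Finding("xss", "high", "tests/fixtures/page.py"),
    Finding("hardcoded-secret", "high", "app/config.py"),
]
kept = filter_findings(findings, ignored_rules={"hardcoded-secret"},
                       ignored_paths=("tests/",))
print([f.rule for f in kept])  # ['sql-injection']
```

The trade-off is the one the article hints at: every exclusion cuts noise but also widens the set of issues nobody looks at, so exclusions are best kept narrow and reviewed periodically.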

Anthropic says Claude can already detect some of the most common — and damaging — security flaws in modern software, including:

SQL injection vulnerabilities

Cross-site scripting (XSS) issues

Authentication and authorisation weaknesses

Insecure data handling

Dependency vulnerabilities
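To make the first two categories concrete, here is a minimal Python sketch (standard library `sqlite3` and `html` only) of the kind of pattern a reviewer, human or AI, would flag, next to the usual fix. The table, function names, and payload are illustrative assumptions, not output from Claude Code.

```python
import html
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)",
                 [("alice", "admin"), ("bob", "user")])

def find_user_unsafe(name):
    # VULNERABLE: user input is spliced directly into the SQL text.
    return conn.execute(
        f"SELECT name, role FROM users WHERE name = '{name}'").fetchall()

def find_user_safe(name):
    # Parameterised query: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)).fetchall()

def render_greeting(name):
    # Escape before inserting into HTML so markup is displayed, not executed (XSS fix).
    return f"<p>Hello, {html.escape(name)}</p>"

payload = "x' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2
print(len(find_user_safe(payload)))    # 0
print(render_greeting("<script>alert(1)</script>"))
```

The unsafe version returns both rows because the payload closes the string literal and appends `OR '1'='1'`, which is always true; the parameterised version treats the whole payload as a literal name and matches nothing.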

The selling point is clear: “Catch security issues in your inner development loop, when they’re easiest and cheapest to fix.”

A Real-World Win Before Launch

Anthropic hasn’t just built this tool; it has dogfooded it on its own code. According to the company, the new GitHub Action spotted a remote code execution vulnerability in an internal tool before it reached production.

The bug could have been exploited via DNS rebinding, an attack in which a domain the attacker controls is made to resolve to a local or internal IP address, so that a malicious web page can send requests to a service (such as a locally running HTTP server) that assumes only trusted callers can reach it. In less vigilant settings, such an issue could have become an expensive, embarrassing, or even legally significant security incident.
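Anthropic hasn’t detailed its fix, but a common defence against DNS rebinding for a local-only HTTP server is to reject any request whose `Host` header isn’t an expected local name: after rebinding, the browser still sends the attacker’s hostname. The sketch below assumes a hypothetical tool that should only answer to `localhost`, `127.0.0.1`, or `[::1]`; the allowlist and handler are illustrative, not Anthropic’s code.

```python
from http.server import BaseHTTPRequestHandler

# Host names a local-only tool expects (an assumption for this sketch).
ALLOWED_HOSTS = {"localhost", "127.0.0.1", "[::1]"}

def is_allowed_host(host_header):
    """Return True if the Host header names this machine, ignoring any port."""
    if not host_header:
        return False
    host = host_header.strip().lower()
    if host.startswith("["):
        # IPv6 literal such as "[::1]:8080": keep everything up to the closing bracket.
        host = host.split("]", 1)[0] + "]"
    else:
        # Drop a trailing ":port" if present.
        host = host.rsplit(":", 1)[0]
    return host in ALLOWED_HOSTS

class LocalToolHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # A rebound request carries the attacker's domain in Host, so it is refused here.
        if not is_allowed_host(self.headers.get("Host")):
            self.send_error(403, "Forbidden host")
            return
        self.send_response(200)
        self.end_headers()
        self.wfile.write(b"ok\n")
```

To serve it, pass the handler to `http.server.HTTPServer(("127.0.0.1", 8765), LocalToolHandler)` and call `serve_forever()` (the port is arbitrary). Binding to the loopback address alone is not enough, because rebound requests originate from the victim’s own browser on the same machine.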

By catching it early, Anthropic avoided a potential breach and illustrated the point: integrated AI security reviews can stop serious problems before they ship.

Performance Benchmarks: Opus 4.1 Sets a New Bar

These security tools arrive alongside the release of Claude Opus 4.1, which set a new state-of-the-art score on the SWE-bench Verified benchmark.

For context, SWE-bench Verified is a human-validated set of real GitHub issues; it measures whether a model’s patch resolves each issue while keeping the repository’s existing tests passing. Claude Opus 4.1 scored 74.5%, up two percentage points from Opus 4’s 72.5%.
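A two-point gain is easy to over- or under-read, so here is the arithmetic, taking Opus 4’s previously reported 72.5% on the same benchmark as the baseline. Small in relative terms, the gain still removes a noticeable share of the remaining failures:

```latex
\begin{aligned}
\Delta_{\text{abs}}  &= 74.5\% - 72.5\% = 2.0 \text{ percentage points} \\
\Delta_{\text{rel}}  &= \frac{74.5 - 72.5}{72.5} \approx 2.8\% \text{ more issues resolved} \\
\Delta_{\text{fail}} &= \frac{27.5 - 25.5}{27.5} \approx 7.3\% \text{ fewer unresolved issues}
\end{aligned}
```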

Key highlights from early adopters:

GitHub reports “particularly notable performance gains in multi-file code refactoring.”

Rakuten Group says Claude is now exceptionally good at pinpointing exact fixes in large codebases without breaking unrelated functionality.

Windsurf reports a one-standard-deviation improvement over Opus 4 on its junior developer benchmark, roughly the same leap as moving from Sonnet 3.7 to Sonnet 4.

The Bigger Picture: AI, Speed, and Security Tensions

AI-powered development is no longer fringe. Gartner projects that by 2028, 75% of enterprise software engineers will use AI code assistants, up from less than 10% in early 2023. This transformation is being driven by:

Productivity gains — AI can scaffold features, refactor code, and write tests in minutes.

Talent shortages — AI helps smaller teams ship at a scale previously reserved for tech giants.

Competitive pressure — Firms that adopt AI tools early are outpacing slower rivals.

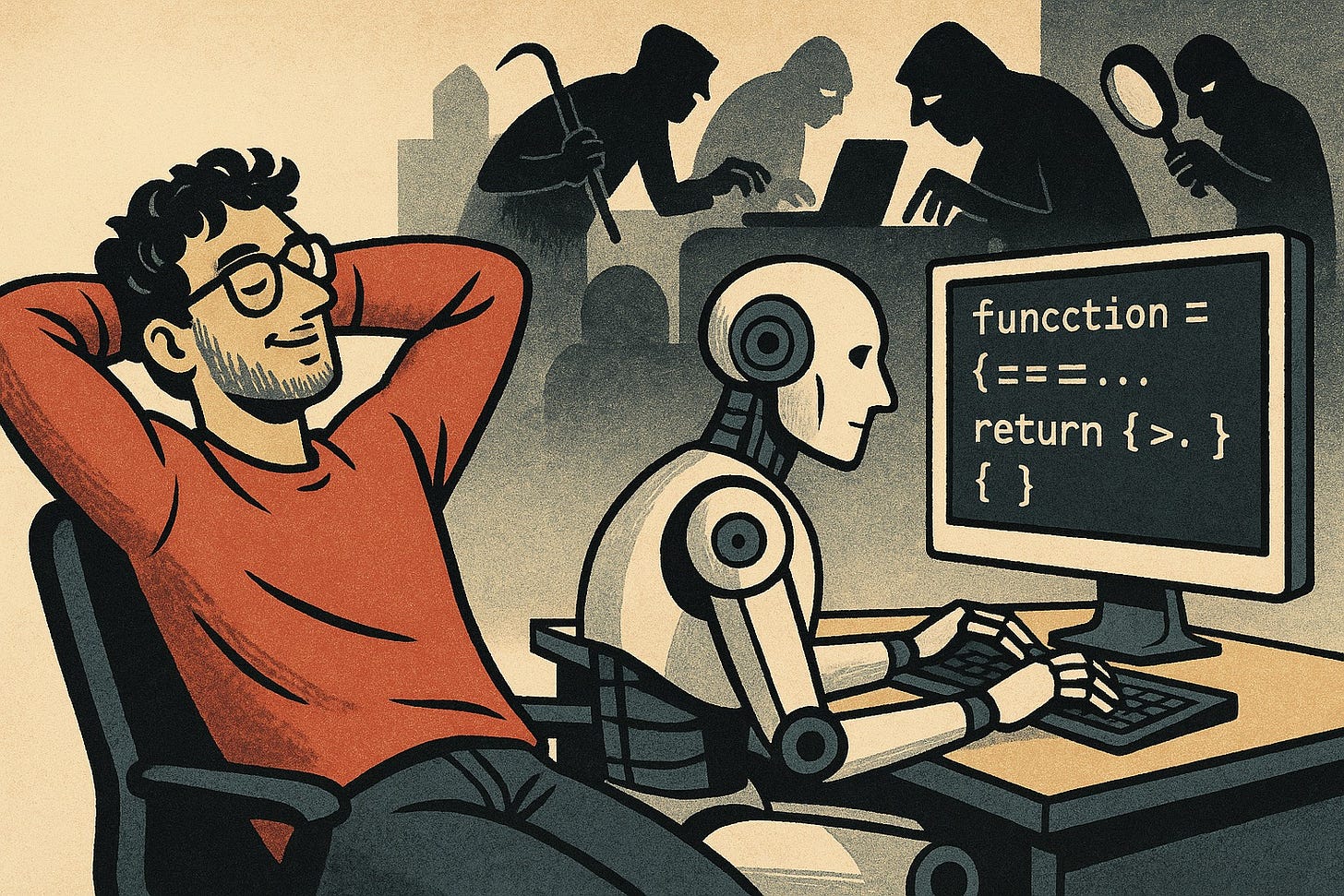

But this “AI acceleration” comes at a cost. Security researchers warn of “vibe coding”: a culture where developers rely heavily on AI without fully understanding or validating its output. AI-generated code can look clean and idiomatic while still hiding vulnerabilities that slip through review and into production.

Given the financial and reputational damage of breaches, AI-assisted development must evolve beyond raw productivity gains. Anthropic’s move suggests we’re entering a second phase of the AI-in-development story: “speed with guardrails.”

Why Anthropic’s Approach Stands Out

Anthropic’s value proposition here isn’t just that it’s adding security tools — it’s how it’s integrating them.

Inline, Continuous Security Checks: By embedding vulnerability detection into GitHub pull requests, Anthropic aligns with shift-left security principles, pushing checks earlier in the development lifecycle, where fixes are faster and cheaper.

AI-Assisted Remediation, Not Just Detection: Detection alone isn’t enough; developers need to understand how to fix the issue. Claude offers context-aware remediation suggestions, turning a static warning into an actionable fix.

Customisability for Real-World Teams: False positives kill adoption. Anthropic’s inclusion of rule customisation acknowledges that enterprise teams have diverse codebases, risk profiles, and compliance requirements.

Dogfooding for Credibility: Anthropic has used these tools internally and caught real vulnerabilities, which both validates the product and builds trust.

Pricing: No “Security Tax” for Now

One notable decision: pricing stays the same as Opus 4 — $15 per million input tokens and $75 per million output tokens. For enterprise teams already paying for Claude Code, these security features come as part of the package.

That stands out in an industry where “enterprise” security features often carry premium price tags. It may be a strategic move to drive quick adoption and lock in market share before competitors follow suit.
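To put the per-token rates in perspective, the sketch below estimates the API cost of a single review call at the Opus 4.1 prices quoted above. The token counts are hypothetical, and the calculation ignores prompt caching, batch discounts, and subscription plans, any of which would change the real figure.

```python
# Claude Opus 4.1 API rates quoted above, in USD per million tokens.
INPUT_USD_PER_MTOK = 15.0
OUTPUT_USD_PER_MTOK = 75.0

def review_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one model call from its input and output token counts."""
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000

# Hypothetical pull-request review: 200k tokens of diff and context in, 10k tokens of findings out.
cost = review_cost_usd(200_000, 10_000)
print(f"${cost:.2f}")  # $3.75
```

Because output tokens cost five times as much as input tokens, long remediation write-ups weigh more heavily on the bill than large diffs do.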

Potential Enterprise Impact

For large organisations — especially those in finance, healthcare, defence, and critical infrastructure — this could be transformative.

Regulatory Alignment: Automated vulnerability scanning and remediation supports practices called for in frameworks such as ISO/IEC 27001 and the NIST Secure Software Development Framework (SSDF), and helps address the risk categories in the OWASP Top 10.

Audit Trails: GitHub integration creates a verifiable log of security scans and remediation steps — useful for compliance and audits.

Reduced Mean Time to Remediation (MTTR): By surfacing vulnerabilities at the pull request stage, many issues could be resolved in hours rather than weeks.
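MTTR is simply the average time between detecting an issue and fixing it. A minimal sketch, assuming you can export detection and fix timestamps (for example, when a review comment was posted and when the fixing commit landed); the data below is invented for illustration.

```python
from datetime import datetime, timedelta

def mean_time_to_remediation(intervals):
    """Average of (fixed_at - detected_at) over remediated issues."""
    if not intervals:
        raise ValueError("no remediated issues to average")
    durations = [fixed - detected for detected, fixed in intervals]
    # sum() needs a timedelta start value; timedelta / int is supported.
    return sum(durations, timedelta()) / len(durations)

# Hypothetical data: three issues flagged on pull requests and fixed before merge.
issues = [
    (datetime(2025, 8, 6, 9, 0), datetime(2025, 8, 6, 11, 0)),   # 2 h
    (datetime(2025, 8, 6, 13, 0), datetime(2025, 8, 6, 17, 0)),  # 4 h
    (datetime(2025, 8, 7, 10, 0), datetime(2025, 8, 7, 13, 0)),  # 3 h
]
print(mean_time_to_remediation(issues))  # 3:00:00
```

Comparing this figure before and after adopting PR-stage scanning is one concrete way to test the “hours, not weeks” claim on your own repositories.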

Challenges and Questions Ahead

While Anthropic’s announcement is promising, there are open questions:

Accuracy: How often will these tools generate false positives or miss critical vulnerabilities?

Developer Trust: Will teams trust AI-based security scans enough to integrate them into CI/CD pipelines without parallel human review?

Vendor Lock-In: If companies deeply integrate Anthropic’s GitHub Actions, will switching providers later be difficult?
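The accuracy question maps onto two standard metrics: precision (the fraction of flagged issues that are real, which false positives drag down) and recall (the fraction of real issues that get flagged, which misses drag down). Measuring either requires ground truth, such as deliberately seeded vulnerabilities or a later manual audit. The sketch and audit numbers below are hypothetical.

```python
def precision_recall(reported, actual):
    """Compare reported vulnerability IDs against ground-truth vulnerability IDs."""
    reported, actual = set(reported), set(actual)
    true_pos = len(reported & actual)
    # Convention for empty sets: nothing reported means no false positives (precision 1.0);
    # nothing to find means nothing was missed (recall 1.0).
    precision = true_pos / len(reported) if reported else 1.0
    recall = true_pos / len(actual) if actual else 1.0
    return precision, recall

# Hypothetical audit: the scanner flagged 5 issues (4 real); the codebase had 8 real issues.
reported = {"V1", "V2", "V3", "V4", "FP1"}
actual = {"V1", "V2", "V3", "V4", "V5", "V6", "V7", "V8"}
p, r = precision_recall(reported, actual)
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.80 recall=0.50
```

Customisable rules mostly trade recall for precision: suppressing noisy categories raises the share of findings worth acting on, at the risk of hiding some real issues.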

The market will likely provide answers over the coming year — but Anthropic has clearly staked its claim early.

Final Take: A Security-First Pivot for AI Coding Assistants

Anthropic’s release marks a subtle but significant shift in the AI development tool market. The narrative is moving from “AI makes coding faster” to “AI makes coding faster and safer.”

If adopted widely, these features could help mitigate one of the most serious risks of AI-assisted development: insecure code slipping quietly into production. Given the stakes — and the growing dependency on AI coding assistants — that might be the most important innovation in this space yet.