Can Anthropic Survive a $1 Trillion Lawsuit?

The AI giant chases a $150 billion valuation while facing massive legal exposure—and the UK and EU may be even riskier ground

As Anthropic eyes a $150 billion valuation in its next funding round, it finds itself at a historic crossroads—celebrated as one of the world’s most advanced AI labs while also confronting a class action lawsuit that could, in theory, sink the entire company.

At the centre of this drama is Claude, Anthropic’s large language model (LLM), trained on a massive corpus of text. According to US court filings, that training dataset includes up to seven million pirated books, sourced from illegal repositories like LibGen and PiLiMi. With damages under US copyright law capped at $150,000 per work, the theoretical risk tops $1 trillion.

But that’s not the full story. Even if Anthropic survives its legal challenge in the US, the bigger threat may lie across the Atlantic—where UK and EU law offer no “fair use” defence, and where governments are actively cracking down on unauthorised AI training practices.

A $150 Billion Valuation in the Works

In July 2025, reports emerged that Anthropic is in discussions to raise $3–5 billion in new capital. If successful, it would double its previous valuation of $61.5 billion and place it among the world’s most valuable private tech firms—surpassing SpaceX’s last private valuation and nearing OpenAI’s implied worth.

The company’s revenue is reportedly growing at breakneck speed, with estimates ranging from $1 billion to $4 billion annually, largely fuelled by enterprise adoption of Claude and partnerships with Amazon and Google. Middle Eastern sovereign wealth funds are said to be among the interested investors.

But as Anthropic pursues this funding, the legal clouds gathering over its data practices are hard to ignore.

The Class Action Copyright Case

In June 2025, US District Judge William Alsup handed down a pivotal decision. He ruled that Anthropic’s use of lawfully purchased books to train Claude was protected under “fair use”—a key legal shield for transformative, non-commercial uses under US copyright law.

However, the judge was clear that the alleged use of pirated books was not protected, and granted class action certification to proceed. That opens the floodgates for millions of authors and rightsholders to join the suit and claim statutory damages.

The books in question—many pulled from the Books3 dataset—include works from both living and deceased authors, and may span genres from fiction to technical manuals. Anthropic disputes ownership claims, and argues that AI outputs are sufficiently transformative. But the lawsuit will now go to trial, with potentially devastating financial exposure.

“If Anthropic used even a fraction of the Books3 dataset without permission, the damages could be staggering,” one IP lawyer told Publishers Weekly.

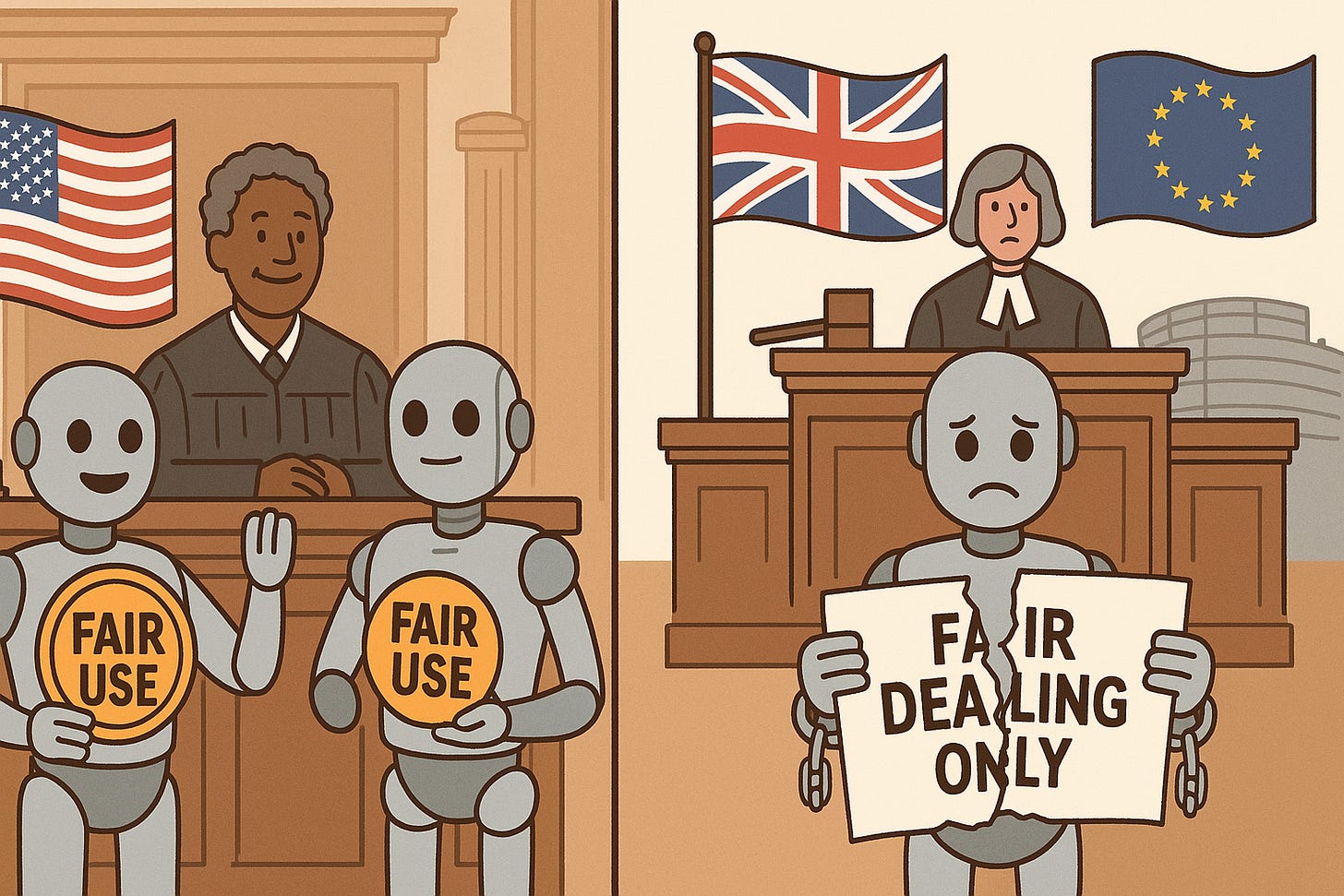

🇬🇧 UK and 🇪🇺 EU: Even Less Legal Protection

While the US has a flexible “fair use” doctrine, UK and EU copyright law is far stricter—and this spells trouble for AI companies.

UK: No Fair Use

UK copyright law only allows limited “fair dealing” exceptions—for criticism, research, or review. These exceptions do not extend to commercial AI model training.

The UK government is considering a proposed “opt-out” mechanism, which would allow creators to block use of their works via registration or metadata tags. If adopted, this could expose Anthropic and other firms to automatic infringement liability unless they can prove compliance.

Notably, the UK courts are already hearing high-profile AI copyright cases—including Getty Images v. Stability AI—and may soon set further precedent on AI model legality.

EU: Transparency, Opt-Out, and Enforcement

The EU’s Digital Single Market (DSM) Directive allows limited text and data mining (TDM) under Article 4, but only for research—not commercial LLM training. Crucially, rightsholders can “opt out”, and many already have.

With the EU AI Act (2025) now in force, developers of general-purpose AI models (like Claude) must:

Respect copyright laws and opt-outs

Publish a summary of training data sources

Demonstrate compliance upon request

This means that even if Anthropic survives its US case, deploying Claude in Europe could expose it to new infringement claims—especially if training datasets included opt-out works or pirated content.

In short: the legal risk is arguably higher in the UK and EU, where the lack of a fair use doctrine removes a key defence and new regulatory enforcement is just beginning.

Why This Case Matters for Everyone in AI

Anthropic isn’t alone. OpenAI, Meta, Google, and Mistral have all faced scrutiny over the sources of their training data. But Anthropic’s case is unique in its scale, its timing, and its potential to set global precedent.

Three Key Implications:

Licensing is now inevitable

The era of “scrape first, ask later” is ending. AI companies will need to secure direct licences from publishers, authors, and rights collectives, or restrict training to public domain and open-source data.

Transparency becomes enforceable

Under the EU AI Act and proposed UK reforms, companies must disclose how their models were trained. This turns what was once a black box into a regulatory minefield.

Investor risk is rising

A company with $4 billion in revenue but $1 trillion in potential legal liability is not just a unicorn—it’s a high-stakes gamble. Valuations may be adjusted to reflect litigation and compliance risk.

Final Thoughts

Anthropic is one of the brightest stars in the AI universe—but it is also the most visible test case for what happens when innovation collides with copyright law.

If it succeeds in raising at a $150 billion valuation while fending off a historic lawsuit, it will reset the rules of engagement in the AI sector. But if it fails—if courts rule that pirated content poisoned the model’s dataset—it could force an industry-wide reckoning.

For UK and EU regulators, this is a moment of leverage. For authors and artists, it’s a chance to reclaim control. And for AI companies, it’s a reminder: no matter how smart your model is, the law still matters.