18 Months to Sovereignty

Why the EU AI Act Delay Forces a UK Choice

Why it matters: The EU just handed UK organisations an 18-month window before high-risk AI rules take effect. This isn't compliance relief — it's a strategic inflection point. With the UK government silent on dedicated AI legislation, British businesses must decide alone whether to align with Brussels or chart a distinctively sovereign path.

Join The Control Layer for weekly perspectives on AI, cybersecurity, and building technology that serves human purpose.

The delay nobody expected

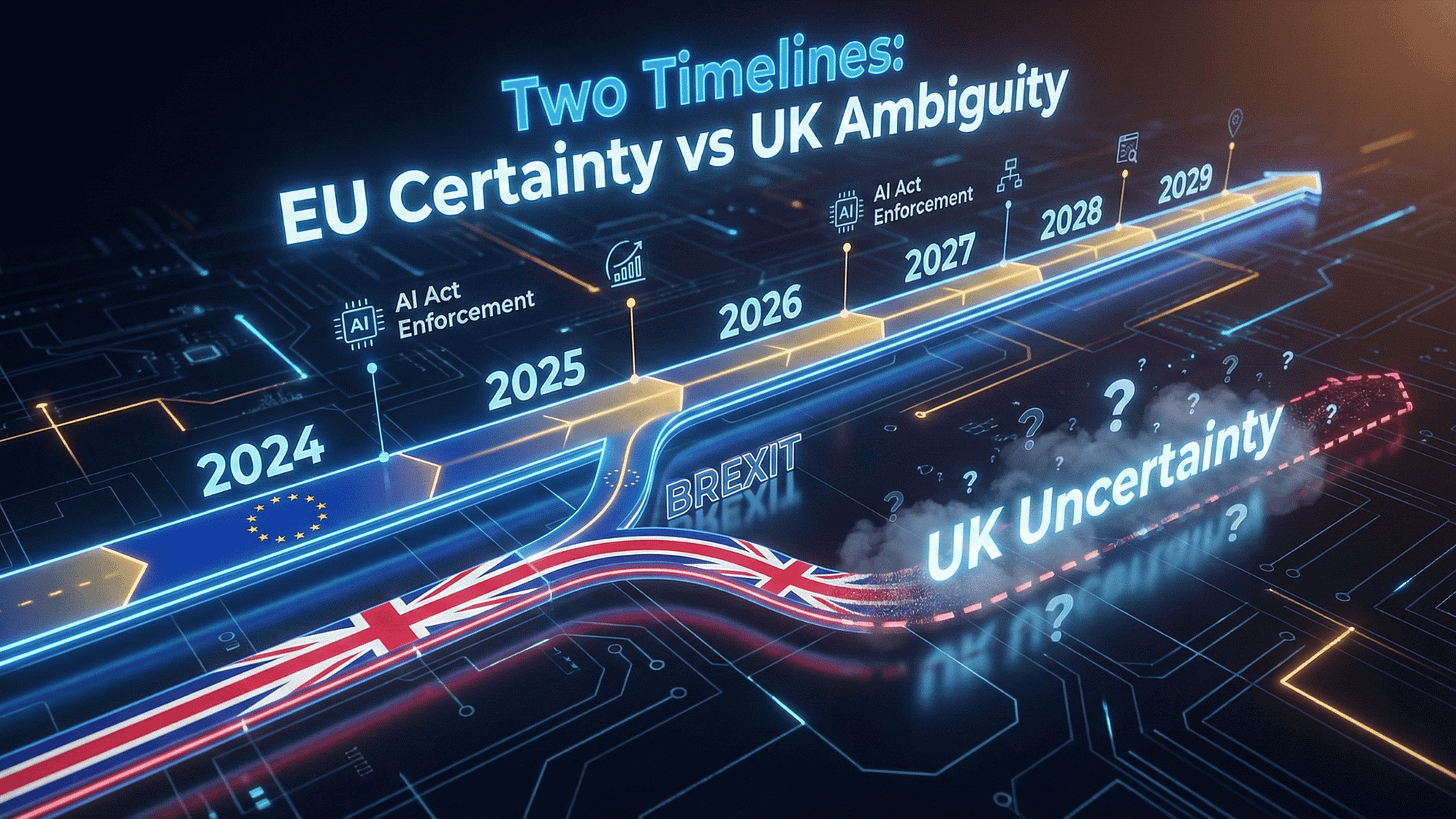

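In November 2025, the European Commission proposed something remarkable: pushing back the EU AI Act’s most demanding requirements by more than a year. Under the proposal, high-risk AI rules for Annex III systems, originally due in August 2026, would apply from December 2027. High-risk systems covered under Annex I (AI used in products already regulated by EU harmonisation legislation) would face enforcement from August 2028 rather than August 2027.[6]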

For organisations that spent 2024 and 2025 scrambling to understand compliance obligations, this feels like an unexpected reprieve. The Brussels machinery, usually relentless in its regulatory march, has effectively admitted it needs more time to develop the technical standards and implementation guidance that make the Act workable.

But reprieve implies you were on a fixed path. UK organisations face a more interesting question: which path are we actually on?

Britain’s curious silence

Here is where the story gets properly British — meaning awkward and unresolved.

The UK government’s response to its own copyright and AI consultation is now due 18 March 2026.[5] That consultation revealed a striking disconnect: only 3% of respondents supported the government’s preferred approach of allowing AI training on copyrighted works with an opt-out mechanism. The majority favoured mandatory licensing. The government has delayed its response twice already.

More telling is what hasn’t happened. Despite months of speculation, no dedicated AI Bill appeared in 2025. AI minister Kanishka Narayan has stated that existing frameworks — data protection, competition law, equality legislation, online safety rules — already apply to AI systems.[5]

The message is clear: we’re not building new regulatory architecture. We’re retrofitting what we have.

A dedicated UK AI Bill is now expected in the second half of 2026 at the earliest. Industry observers consider even that timeline uncertain.[5]

This creates a peculiar situation. The EU is delaying enforcement but accelerating standard-setting — voluntary codes of practice, technical standards committees, implementation guidance flowing from Brussels throughout 2026. The UK is doing neither. No enforcement timeline to slip. No standards to develop. Just silence.

The vacuum is the strategy

Let me be direct about what this means for UK organisations.

You are not waiting for regulatory clarity. You are operating in a deliberate vacuum. The government has made a calculated bet that sectoral regulators — the FCA, Ofcom, the ICO, the CMA — can handle AI governance through existing powers without primary legislation. Whether that bet pays off depends entirely on how those regulators interpret their mandates.

For organisations serving European markets, the calculus is straightforward. EU AI Act compliance remains necessary regardless of UK domestic policy. The delay simply extends your preparation runway. Build frameworks now, refine through 2026, implement ahead of December 2027 enforcement.

But for organisations primarily serving UK markets — defence contractors, public sector suppliers, domestic financial services, healthcare providers — the question becomes genuinely strategic. Do you:

Option A: Build EU-aligned frameworks anyway. Hedge against potential future UK alignment. Maintain optionality for European market expansion. Accept higher compliance costs now for reduced risk later.

Option B: Build fit-for-purpose UK frameworks. Optimise for current UK regulatory expectations. Reduce compliance overhead. Accept the risk that future UK legislation could require significant rework.

Option C: Wait and see. Defer material investment until the UK position clarifies. Minimise near-term costs. Accept concentrated implementation risk in late 2026 or 2027.

None of these options is obviously correct. That’s rather the point.

What the EU delay actually signals

The Commission’s Digital Omnibus proposal isn’t simply about buying time. Read carefully, it reveals where European regulators expect the hard problems to emerge.

The delay to December 2027 for high-risk rules under Article 6(2) and Annex III acknowledges that technical standards aren’t ready. The European standardisation bodies — CEN, CENELEC, ETSI — need another year to produce guidance that makes compliance assessable.[6]

More interesting is what isn’t delayed. The Article 50 obligations to mark AI-generated content get only a short grace period: systems already on the market before August 2026 would have until February 2027 to comply.[6] The transparency requirements that matter most for public trust stay essentially on schedule.

The proposal would also remove the general AI literacy obligation under Article 4, keeping only the specific training requirements for deployers of high-risk systems.[6] Brussels is prioritising depth over breadth, focusing enforcement resources on genuinely high-risk applications rather than attempting universal uplift.

And there’s a notable expansion: the permission to process special category data for bias detection and correction would extend to all AI systems, not only high-risk ones, subject to strict safeguards.[6] The EU is acknowledging that responsible AI development requires access to sensitive data categories, provided appropriate protections exist.

These details matter because they reveal regulatory intent. The EU isn’t backing away from AI governance. It’s refining the approach based on implementation reality.

The UK’s accidental experiment

Here’s a thought that keeps me up at night, in the way only regulatory philosophy can.

The UK is running an uncontrolled experiment in AI governance. By declining to legislate while the EU extends its timeline, we’re creating a natural comparison. Does sectoral regulation through existing frameworks produce better outcomes than comprehensive primary legislation? We’ll find out.

The optimistic reading: UK regulators, freed from prescriptive legislation, can respond flexibly to specific sectoral risks. The ICO addresses AI privacy concerns through UK GDPR enforcement. The FCA handles financial services AI through existing conduct rules. The CMA examines AI market concentration through competition powers. Each regulator applies domain expertise without the rigidity of a unified AI Act.

The pessimistic reading: without clear primary legislation, UK organisations face fragmented, unpredictable enforcement. Different regulators apply different standards. Gaps emerge between sectoral boundaries. International partners question UK AI governance credibility. Equivalence discussions with the EU become complicated.

Both readings contain truth. The outcome depends on execution — by regulators and by organisations.

What boards should actually do

Let me translate this into actionable guidance, because strategic ambiguity only goes so far.

For boards with EU market exposure:

The December 2027 deadline should anchor your planning. Begin compliance assessment now. Use 2026 to develop classification methodologies, risk management frameworks, and documentation practices. The delay is runway, not relief.

Watch the voluntary code of practice on marking AI-generated content. The second draft is expected in March 2026 and the final code in June 2026.[6] Early adoption signals governance maturity to European partners and customers.

For boards serving primarily UK markets:

Map your AI systems against existing UK regulatory frameworks. Identify which sectoral regulators have jurisdiction. Engage proactively: regulators still developing their approaches are likely to welcome industry input.

Build governance frameworks that work regardless of future legislation. Focus on demonstrable accountability, documented decision-making, and measurable outcomes. Good governance doesn’t require specific regulation.

For all boards:

Don’t conflate regulatory delay with reduced risk. The operational, reputational, and ethical risks from AI systems exist independent of enforcement timelines. Governance frameworks should address actual risk, not compliance calendars.

Use the 18-month window to build institutional capability. Train your teams. Develop assessment methodologies. Create documentation practices. When enforcement eventually arrives — whether from Brussels, Westminster, or both — organisations with mature governance will adapt faster than those starting from scratch.

Analysis: the sovereignty question nobody’s asking

Here’s my honest assessment of where this leads.

The UK’s silence on AI legislation isn’t strategic ambiguity. It’s strategic avoidance.

The government has decided that AI governance is too politically contested, technically complex, and economically uncertain to address through primary legislation in this Parliament. The “pro-innovation” framing provides cover for inaction.

This might work. Sectoral regulation might prove sufficiently flexible and robust. UK AI governance might emerge as a genuine alternative to the EU’s comprehensive approach — lighter touch, more adaptive, better suited to fast-moving technology.

But it might not. The EU is building institutional infrastructure for AI governance: technical standards, certification bodies, enforcement mechanisms, legal precedent. The UK is building none of this. If EU AI Act equivalence becomes a requirement for data flows or market access — not impossible given the trajectory of EU digital policy — the UK will need to construct regulatory architecture rapidly from a standing start.

The 18 months the EU has granted itself is also 18 months for UK policy to clarify.

I would bet significant money that it won’t. British organisations should plan accordingly.

Disclaimer: This article represents analysis based on publicly available information as of January 2026. It does not constitute legal, financial, or professional advice. Organisations should consult qualified advisors for specific compliance guidance.

If your organisation needs support navigating AI governance frameworks — whether aligned with EU requirements or tailored for UK markets — Arkava helps mid-market enterprises turn regulatory uncertainty into strategic advantage.